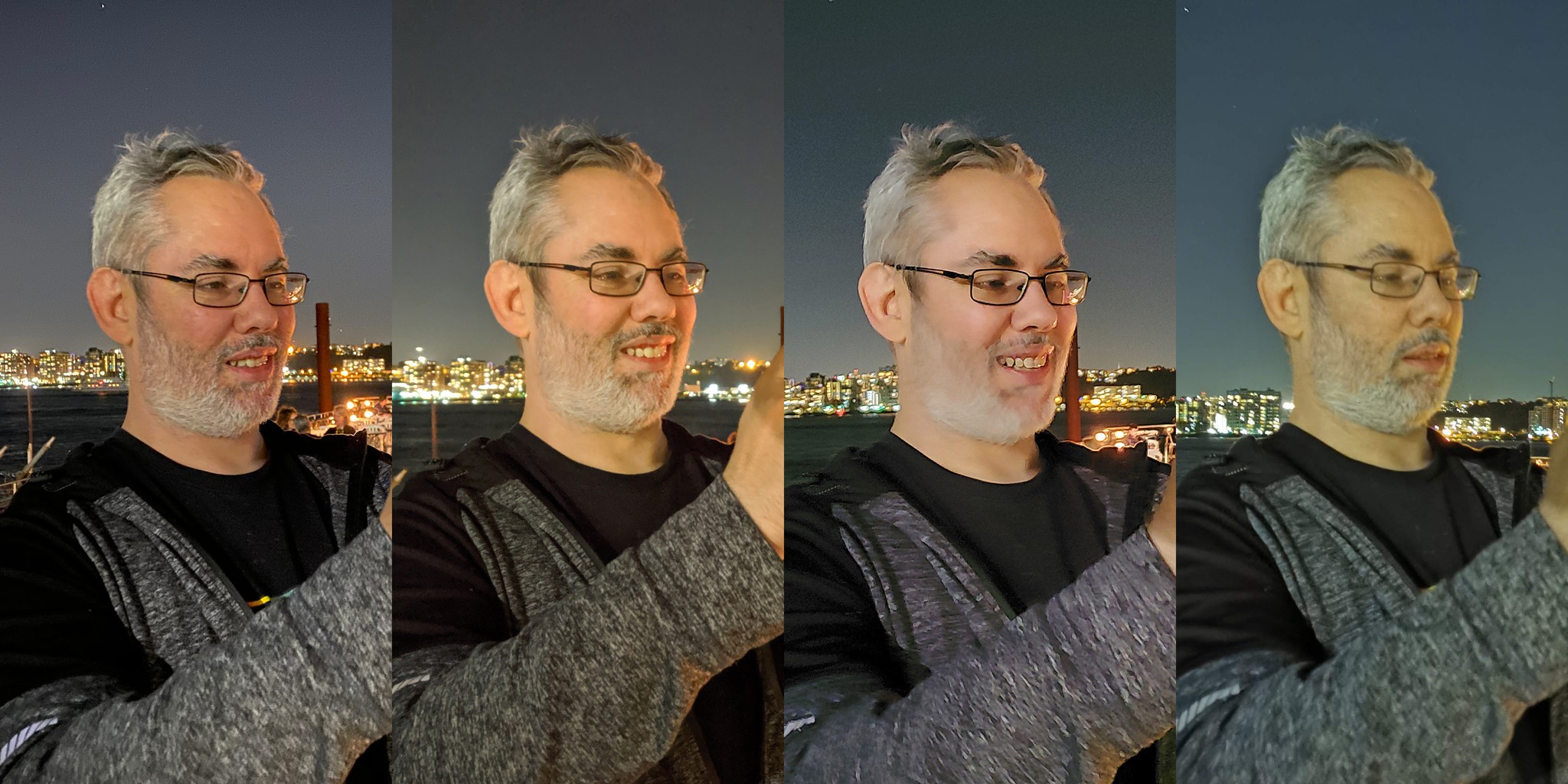

Night Mode Showdown: iPhone 11 vs. Pixel 3 vs. Galaxy Note 10 vs. P30 Pro

iMore offers spot-on advice and guidance from our team of experts, with decades of Apple device experience to lean on. Learn more with iMore!

There's a new Night King in town. At least that's what some people are saying. As usual, Apple wasn't first to computationally enhanced low light photography, just like they weren't first to multiple cameras or depth effects, or even phones at all.

Now that they're here, they're doing it in typical Apple style. It doesn't do everything. You can't force it on manually. You can't use it with the focus-pixel-free ultra-wide-angle. But what you can do, you can do well, with good detail recovery, texture preservation, and tone mapping.

In a really opinionated, maybe even controversial way.

And that's the point. This is as much art as science. Do you drain color in the dark like the human eye or do you boost it? Do you turn night into day or shine a light in the dark? In the end, some aspects are objectively better on some camera phones. Others... are far more subjective.

To figure it all out, I collab'ed up with my friends Michael Fisher, TheMrMobile, and Hayato Huseman from Android Central. And we shot the sensors off the Google Pixel 3, Samsung Galaxy S10+, Huawei P30 Pro, and the brand-new iPhone 11 Pro.

Pixel 3

Yeah, yeah, enhanced low-light photography has been a thing for a while now. I'm sure one of you old-school phone nerds will point out in the comments how Nokia or HTC shipped it back in 1812. So, yeah, totally. But it became table-stakes in 2018 when Google announced the feature for the Pixel 3.

Say what you want about Pixel sales numbers, and whether it's mainstream or just geek stream, it made night mode matter. And, in typical Google style, it did it with algorithms.

On the Pixel, Night Sight is a separate mode you have to switch to manually. But, of course, that means you can force it on manually any time you want. Tap that tab, and the Pixel will be good to low-light go.

Not that you can tell from the viewfinder. This continues to be my biggest gripe with Google's camera. What you see isn't what you get. So, you're left to frame and focus on whatever you can, as best you can.

When you take the picture though, that's when the magic happens.

According to Google, the Pixel averages frames, in other words, exposure stacking. It takes a bunch of full-resolution exposures over the course of up to 6 seconds — how long it takes depends on how dark it is — and then uses the HDR+ system on older Pixels and the Super Res Zoom system on Pixel 3 to average and merge the exposures. That gets you more light and less noise.
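
To make the "less noise" part concrete, here's a minimal sketch of frame averaging. It's not Google's pipeline: it assumes the frames are already perfectly aligned, and the scene values, noise level, and function names (`simulate_frame`, `stack_frames`, `rms_error`) are all made up for illustration.

```python
import random

def simulate_frame(scene, noise_sigma, rng):
    """Return one noisy capture of `scene` (a flat list of pixel brightness values)."""
    return [pixel + rng.gauss(0.0, noise_sigma) for pixel in scene]

def stack_frames(frames):
    """Average already-aligned frames pixel by pixel."""
    count = len(frames)
    return [sum(values) / count for values in zip(*frames)]

def rms_error(image, reference):
    """Root-mean-square difference between an image and the noise-free scene."""
    squared = sum((a - b) ** 2 for a, b in zip(image, reference))
    return (squared / len(reference)) ** 0.5

rng = random.Random(42)
scene = [10.0] * 2000  # a dim, uniform patch; the values are invented
frames = [simulate_frame(scene, noise_sigma=4.0, rng=rng) for _ in range(16)]

single_error = rms_error(frames[0], scene)
stacked_error = rms_error(stack_frames(frames), scene)
print(f"single frame noise:   {single_error:.2f}")
print(f"16-frame stack noise: {stacked_error:.2f}")
```

Random noise partly cancels when you average, so the stacked error should land well below the single-frame error. A real camera also has to align the frames and deal with anything that moved between them, which this sketch skips entirely.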

Then, they use a machine-learned algo to adjust the white balance, and apply tone mapping and an s-curve to make the image look more colorful, even more than the human eye can perceive at night.
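
If the s-curve part sounds abstract, here's a rough sketch of what that kind of tone adjustment can look like on normalized brightness values. The specific curve (a smoothstep) and the gamma value are my assumptions, not Google's actual numbers, and the machine-learned white balance step isn't modeled at all.

```python
def lift_shadows(value, gamma=0.5):
    """Brighten a normalized value in [0, 1]; a gamma below 1 lifts dark tones."""
    return value ** gamma

def s_curve(value):
    """Smoothstep contrast curve on [0, 1]: darkens below 0.5, brightens above it."""
    return value * value * (3.0 - 2.0 * value)

def tone_map(pixels, gamma=0.5):
    """Clamp to [0, 1], lift the shadows, then apply the s-curve for contrast."""
    clamped = (min(max(p, 0.0), 1.0) for p in pixels)
    return [s_curve(lift_shadows(p, gamma)) for p in clamped]

night_pixels = [0.0, 0.02, 0.05, 0.10, 0.25, 0.50, 1.0]  # invented sample values
mapped = tone_map(night_pixels)
for before, after in zip(night_pixels, mapped):
    print(f"{before:.2f} -> {after:.2f}")
```

The shadow lift pulls dark values up, and the s-curve then adds contrast back around the midtones so the result doesn't look flat and washed out.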

Once you snap the photo, it'll ask you to hold still and give you a circular progress indicator to show you how long. When you jump to the photo, it'll take another second or two to render out the final Night Sight image.

Samsung

Samsung's Night mode used to turn on automatically but back in April they updated to add a manual tab. There's no live preview, far as I can tell. So, like Google, what you see is what is, not what you will get. When you hit the button, it tells you to hold steady.

Then, according to Samsung, they lower camera sensitivity and decrease the shutter speed, which could mean lower signal gain on the sensor to combat the noise a higher ISO would inject, paired with a slower shutter. It's hard to tell because, unlike Google or Apple, Samsung doesn't really talk up the process.

That's why there are a couple of different explanations floating around for what's actually happening. Some say it's bursts that get exposure stacked, again like Google, to increase light and decrease noise. Others, that it's just a long exposure. Samsung says tripods help, which suggests there is some long exposure mechanic.

Also, the capture is processed to intelligently reduce noise and balance contrast and light, which seems to preserve a really good amount of texture, even in backgrounds. And it's color corrected to really brighten and saturate shots.

It's really, really fast to take the shot. Saving takes a while, though. There's a progress meter around the shutter button and then it says saving on the viewfinder, which takes a few seconds in total.

P30 Pro

The Huawei P30 Pro has such a monstrous camera sensor on it that you can get really good low-light results simply by taking a regular old photo. You know, like our ancestors used to. But it also has a computational Night Mode. Yeah, a separate tab you can tap into action, too, and then take a picture.

When you shoot, you get about a paragraph of text telling you to hold steady. At that point, Huawei opens up the shutter for up to four seconds. Again, it was hard to find a lot of technical details, but it looks like it's doing multiple shots at multiple exposures and stacking them as well. Then running algorithms to average and merge the results into remarkably clean final images…

… with remarkably terrible color science. Which still seems to be Huawei's jam right now.

You get a countdown and a progress circle to keep you busy while the shot processes, and the viewfinder pulses from black to bright. Then, another progress circle afterward to keep you busy while it's saving.

Once it's done though, your shot is ready.

iPhone 11 / Pro

Apple's new-to-iPhone 11 Night Mode is automatic. Which is great, because it shows up when you need it, but not so great because you can't force it on if and when you want to. When the light goes down, Night Mode comes on. If it gets too dark, it'll switch to the flash. If you disable the flash, it'll stay on and do its best.

When Night Mode activates, its icon turns yellow. Depending on how dark it gets, it'll show a number from 1 to 3, signifying how many seconds you have to hold the phone steady in order to capture the image.

You can also tap the icon to reveal manual controls, where you can slide the time left to 0, which turns Night Mode off, or right, which will typically max out at 3 or 4. If you're on a tripod, it can go all the way up to 28 seconds for some serious long exposure or astrophotography.

Like Portrait Mode on the iPhone, the live preview shows the Night Mode result you're going to get before you even take the photo. That way, you can compose it the way you want it — what you see is what you get — but, also, the system can use the same preview feed to start pre-processing the image.

It doesn't work on the new ultra-wide angle lens, which lacks the Focus Pixels (Apple's name for phase-detection autofocus) and the optical image stabilization of the wide angle and, on the Pro, the telephoto as well.

When you take the shot, the indicator slides down and the preview goes dark and then brightens, you know, to help you pass the short expanse of time.

All the while, the system is fusing together multiple images, using adaptive bracketing, based on what it determines from the preview. Those brackets can go very short, if there's more motion, or they can go long, if there's more shadow. That lets it minimize blur and maximize the amount of detail recovered.
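
Apple hasn't published how it picks those brackets, so treat the following as a toy sketch of the general idea only. The `motion` and `shadow` scores, the `plan_brackets` function, and the 4:1 long-to-short ratio are all hypothetical, invented here just to show bracket lengths shifting with the scene.

```python
def plan_brackets(motion, shadow, total_seconds, frame_count=6):
    """Split a capture budget into short and long exposures.

    `motion` and `shadow` are hypothetical scores in [0, 1] that a preview
    analysis might produce; they are not real Apple parameters.
    """
    if not (0.0 <= motion <= 1.0 and 0.0 <= shadow <= 1.0):
        raise ValueError("motion and shadow must be within [0, 1]")
    if frame_count < 1 or total_seconds <= 0:
        raise ValueError("need at least one frame and a positive time budget")

    # More motion pushes frames toward short exposures; more shadow toward long ones.
    combined = motion + shadow
    long_share = shadow / combined if combined > 0 else 0.5
    long_frames = round(frame_count * long_share)
    short_frames = frame_count - long_frames

    # Each long frame lasts four times as long as a short one (an arbitrary ratio).
    weights = [1.0] * short_frames + [4.0] * long_frames
    unit = total_seconds / sum(weights)
    return [weight * unit for weight in weights]

busy_street = plan_brackets(motion=0.9, shadow=0.1, total_seconds=3.0)
still_alley = plan_brackets(motion=0.1, shadow=0.9, total_seconds=3.0)
print("busy street:", [round(t, 2) for t in busy_street])
print("still alley:", [round(t, 2) for t in still_alley])
```

With lots of motion, most of the budget goes to short frames that freeze the action. With a dark, static scene, it goes to long frames that pull more out of the shadows.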

Thanks to semantic rendering, it can also distinguish different parts of the image, including and especially people, and apply multi-scale tone mapping and detail enhancement to each as much as possible.

It takes a second to save after that, and your photo is immediately available.

Who does it better?

So, who's the new Night King? For my money, Apple wins on interface. Surprise, surprise. It's a drag you can't force it into Night Mode any time you want, but once it comes on automatically, from the live preview to the manual adjustment slider, the user experience is just excellent.

For the photos themselves, I think it comes down to a subjective tie between Apple and Google. I prefer Apple's choices here. The color looks warmer where Google sticks to the cooler tones, as usual.

Once in a while the Pixel looks sharper, but not always. Apple, though, can often keep different textures looking like… different textures.

Huawei's camera is legit amazing and it takes great shots in low light without a night mode at all, but its interface tells instead of shows, its color consistency just still isn't good, and when it comes to textures it feels like they're just... doing their best.

Samsung comes in last for me for being ok at everything but just not great at anything.

Don't want to take my obviously biased word for it? Watch the video above to see what Michael Fisher, TheMrMobile, and Hayato Huseman of Android Central think.

Then, head on over to Android Central's channel for more from Hayato Huseman, and to see iPhone 11 vs. Galaxy Note 10, and Michael Fisher, of course, at TheMrMobile channel, for his day-one iPhone 11 first impressions.

King of the Night?

So, that's my first look at Night Mode, explained, and my first thoughts on how Apple's new iPhone 11 compares to the current champions.

Many are saying it's the new Night King, but now I want to hear from you: who's doing Night Mode best for you, and why?

Rene Ritchie is one of the most respected Apple analysts in the business, reaching a combined audience of over 40 million readers a month. His YouTube channel, Vector, has over 90 thousand subscribers and 14 million views and his podcasts, including Debug, have been downloaded over 20 million times. He also regularly co-hosts MacBreak Weekly for the TWiT network and co-hosted CES Live! and Talk Mobile. Based in Montreal, Rene is a former director of product marketing, web developer, and graphic designer. He's authored several books and appeared on numerous television and radio segments to discuss Apple and the technology industry. When not working, he likes to cook, grapple, and spend time with his friends and family.