In pursuit of building blocks and the Big Idea

When we think about building the future, we rarely stumble across the perfect path on the first try. Often, we grasp at perceived futures, the ways we expect our world to change and improve, but they're very seldom the best way to build our actual future.

This isn't an admonishment to never strive to create the next big thing; nor is it a bleakly worded yet heartfelt kick in the pants encouraging you to reach for the stars.

You should always reach for the stars and always strive to create the next big thing. But if you hope to succeed, your best guides are those who have gone before you — and failed.

The research kernel of NeXT

NeXT Computer was a big idea that failed.

Its original goal was to create powerful workstation-class computers that, finally, incorporated the vision of the future Steve Jobs had seen at Xerox's Palo Alto Research Center back in 1979.

Most people today abbreviate the center as Xerox PARC and know what it means. But it's important to remember what that acronym stands for: Palo Alto Research Center. Pure research in the heart of Silicon Valley, in the hands of a company founded in 1906, with its heart still in Rochester, New York.

NeXT planned to take all Xerox's good ideas and bring them to the rest of the world. Well, first "the world" would be educational institutions that could afford a few thousand bucks per computer, but ultimately, the plan was to bring this kind of advanced computing to the masses.

At the time, "advanced computing" meant a microkernel architecture, a UNIX personality, object-oriented frameworks, ubiquitous networking using the Internet Protocol, and a user interface that ensured that what you saw on the screen was what you got when you printed it out. It was a laudable goal.

But NeXT failed. By the time the product came to market, it was both too late and too expensive: Companies like Sun and SGI had already taken that market.

NeXT computers had little room to maneuver. The company eventually would become one that merely sold an operating system and a platform for deploying web applications before being bought by Apple — who, at the time, was even worse for the wear.

PostScript problems

Display PostScript was the technology behind the What You See Is What You Get display, along with the printing graphics pipeline on NeXT computers. As you might have guessed from the name, it used Adobe's PostScript graphics and rendering engine — but rather than using it for a laser printer, NeXT used it to display the screen.

Now, there are a lot of problems with DPS, all of which contributed to its ultimate failure. For one, paying Adobe money for every computer or copy of an operating system you ship sucks. But the big issue was in PostScript itself: The language is Turing-complete, which meant that one could write arbitrarily complex programs and they'd be evaluated faithfully... even when you screwed up by writing an infinite loop and locked up your output devices.

But NeXT's implementation added an interesting spin: Each app rendered into its own window, and once a window had its contents, the system retained them independently of the app. In essence, a user could drag a program window over another, non-responsive window without having to worry about that gaudy alert-box stamping effect that Windows suffered from. Because the computer already knew what was under the window the user moved, it could redraw those contents itself rather than asking the application to do so.
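To make the retained-rendering idea concrete, here is a minimal sketch in Python. It is not how Display PostScript or Quartz was actually implemented; the `Window` class, the `draw` callback, and the `composite` function are all hypothetical names invented for illustration. The only point it demonstrates is that once each window's contents are kept in a backing buffer, the screen can be rebuilt after a drag without calling back into any app.

```python
from dataclasses import dataclass, field
from typing import Callable, List

Buffer = List[List[str]]  # a toy "pixel" grid, one character per pixel


@dataclass
class Window:
    x: int
    y: int
    width: int
    height: int
    draw: Callable[[int, int], Buffer]  # hypothetical app-side drawing callback
    backing: Buffer = field(default_factory=list)
    draw_calls: int = 0

    def render_once(self) -> None:
        """Ask the app for its contents and retain them in the backing buffer."""
        self.backing = self.draw(self.width, self.height)
        self.draw_calls += 1


def composite(windows: List[Window], screen_w: int, screen_h: int) -> Buffer:
    """Rebuild the screen back to front using only the retained buffers."""
    screen = [["." for _ in range(screen_w)] for _ in range(screen_h)]
    for win in windows:  # later windows in the list sit on top
        for row in range(win.height):
            for col in range(win.width):
                sx, sy = win.x + col, win.y + row
                if 0 <= sx < screen_w and 0 <= sy < screen_h:
                    screen[sy][sx] = win.backing[row][col]
    return screen


def fill(ch: str) -> Callable[[int, int], Buffer]:
    """Stand-in for an app that paints its whole window with one character."""
    return lambda w, h: [[ch] * w for _ in range(h)]


back = Window(0, 0, 4, 2, fill("A"))   # imagine this app has stopped responding
front = Window(1, 0, 2, 2, fill("B"))
for w in (back, front):
    w.render_once()

before = composite([back, front], 6, 2)
front.x = 4                            # the user drags the front window away
after = composite([back, front], 6, 2)
```

After the drag, the area the front window used to cover is repainted from the back window's retained buffer, and `back.draw_calls` is still 1: the unresponsive app was never asked to redraw.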

Despite this feature, however, Display PostScript went into the dustbin with Developer Preview 3 of Mac OS X. Instead, we got Quartz.

Multitouch mania

Jeff Han is probably best known for his TED Talk introducing multitouch gestures. His work pioneered many of the interactions we take for granted today: Pinch to zoom. Rotation. Multiple points of input rather than a simple mouse cursor.

It was revolutionary. But it also relied upon devices that were beyond the reach of consumers. His work was far from a failure — but neither was it a success.

Putting the pieces together

Looking at the examples above, we can pull out the following big ideas: Small kernel, UNIX personality, retained rendering of application content, multi-touch input.

Small kernel, UNIX personality, retained rendering of application content, multi-touch input.

Small, UNIX, retained rendering, multi-touch.

(Are you getting it yet?)

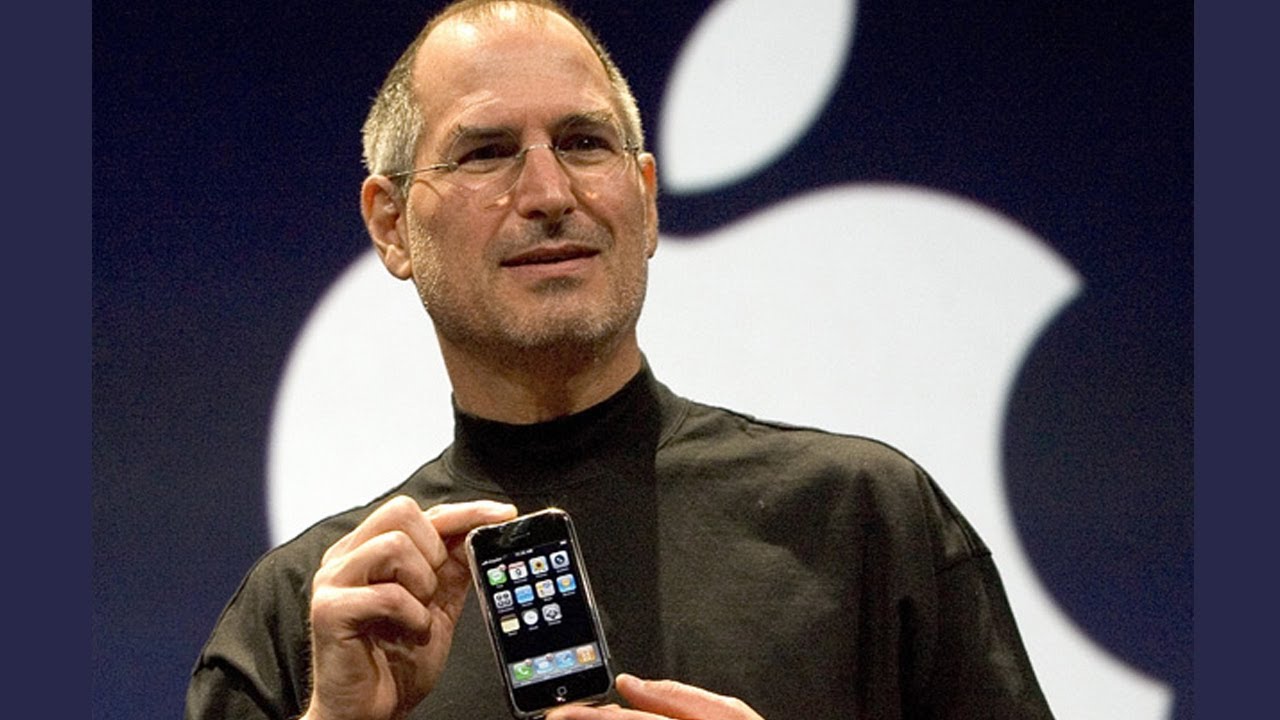

These three big failed ideas helped build a recipe for what we now know as one hugely imaginative idea: the iPhone.

NeXT's core frameworks helped build iOS's communication, while its UNIX personality layer gave the mobile OS a window into the world of the Internet. Display PostScript's window rendering, paired with modern mobile graphics processors, allowed the iPhone's digital buttons to fade and slide with ease. And multi-touch: it was being able to implement multi-touch on a handheld device that brought these big ideas together.

The phone's success doesn't rest solely on these three features. There's far more to the process than picking three ideas that kind of failed and sticking them together. But without each of these failed attempts — and without someone recognizing the potential within those failures — we wouldn't have the iPhone as we know it today.

What can we learn from big ideas?

When we first dream up big ideas, far more often than not they result in abject failure. But if we're willing to reexamine those ideas after the fact, we can find much value in those missteps: Was the technology premature? In the intervening time, have we seen progress or a new avenue where that big idea could be addressed? Did that big idea fail because of cultural or technical reasons?

Ultimately, the big idea is what it says on the tin. It's a big idea. Cynicism has never served an optimist well.

Is this implementation going to be garbage? You can make pretty safe bets on that. But the big ideas are the ones that stick. They're mired in their time, held back by immature technology and a lack of cultural acceptance. They deserve a nod and a mental note to re-examine them when the context changes. The key isn't the big idea. It's figuring out the context in which it succeeds.

Guy is a longtime game, Mac, and iOS developer. Napkin is the app he co-develops,