A month ago today, Apple announced the iPhone 11 and iPhone 11 Pro, and all the camera features that come with them, from the improved wide-angle to the new ultra-wide, from Night Mode to the currently-in-beta Deep Fusion.

Now, before the event even happened, I posted a series of videos on this channel explaining exactly, and I mean exactly, why I thought people were missing what was going on with the iPhone camera system, and underestimating what the iPhone 11 camera was going to deliver. But that was based only on analysis.

Since then, I've had the opportunity to test the cameras themselves, in Cupertino, in New York, a couple times, and right here in Montreal. I've put all of them through all of their paces. Against other iPhones and other phones.

So, did Apple deliver on the photo capture and, more importantly to my own interests, was I right?

iPhone Camera Showdown: Vs. iPhone 6, iPhone 6s, iPhone 7, iPhone X, iPhone XS

One of the most common questions I'm still getting is whether or not it's worth upgrading to the iPhone 11 just for the camera. But it's not only, or even mainly, nerdy iPhone X or iPhone XS owners that are asking. It's people going all the way back to much older iPhones. You know, all of our friends and family members, trying to decide if it's worth it to upgrade now or just hold on for another year.

So, let's focus on still photography for now — I'll do a follow up on video features … and sure, selfies if you want — and look at what all those cameras can do. That way, it's not just about the latest and the greatest, but about how it compares to what you and yours might have.

Also, bonus: Seeing how Apple has been methodically building up their camera capabilities over the years will also help explain just what those capabilities are this year, and why. And, to help keep things manageable in scope and fair on the software side, I'm going to limit it to iPhones that can run iOS 13, which is every iPhone since 2015.

To save time, I'm going to shoot all the samples while we chat. Cool? Cool.

iPhone 6s and 12MP

The iPhone 6s was Apple's first 12MP camera, though it kept the same f/2.2 aperture as the 6. With the 50% increase in pixels came a 50% increase in Focus Pixels, which is Apple's name for phase detection autofocus.

That same bigger camera was packed into the smaller iPhone SE as well.

iPhone 7 and telephoto

iPhone 7 brought the effective 28mm wide-angle aperture to f/1.8, letting in 50% more light. They also made the sensor 60% faster and, to maintain edge-to-edge sharpness, Apple added a 6th, curved element to the lens system. The complete imaging pipeline, from capture to management to display, was bumped up from sRGB to the wider DCI-P3 gamut.

The Plus version also added a second, effective 56mm telephoto lens to the system. Also 12MP, but f/2.8 and with no optical stabilization. Apple fused the two cameras to not only pull better image data for every photo, but provide 2x optical zoom and computational portrait mode on the telephoto.

I remember walking across the Brooklyn Bridge with it well after midnight, and for the first time getting photos I just couldn't have gotten with an iPhone before.

iPhone 8 and X

The iPhone 8 got a new, larger, faster sensor, with deeper pixels, to take in 83% more light, with wider and more saturated color, and less noise. The A11 Bionic image signal processor, or ISP, was also fast enough to start analyzing a scene and optimizing for lighting, people, textures, and motion before you hit the shutter, and fast enough that high dynamic range, or HDR, could just be turned on and left on. And, on the computational side, Portrait Lighting.

iPhone X brought the telephoto lens to f/2.4 and gave it optical stabilization, allowing it to shoot better in low light as well. A little under half a stop better.

They didn't let me get photos I couldn't have before, but they let me get those same photos with even better clarity and detail.

iPhone XS, iPhone XR, and Smart HDR

The iPhone XS wide-angle was both bigger, to drink in more light, and deeper again, to prevent even more cross talk. It also tied the ISP to the neural engine so it could do things like facial landmarking and better masking. And it introduced Smart HDR, which buffered 4 frames to give you both zero shutter lag and the ability to better isolate motion. It interleaved underexposed shots to preserve highlights, added a long exposure at the end to pull even more detail from shadows, and fused them all together at the pixel level to get the best single image possible.
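If you want to see why buffering frames gets you zero shutter lag, here's a minimal sketch. It is not Apple's pipeline, just the general ring-buffer idea: keep the last 4 frames around, and when the shutter fires, hand back the one closest to the press instead of starting a new capture. The `FrameBuffer` class, the timestamps, and the roughly 30 fps preview stream are all assumptions made up for illustration.

```python
from collections import deque


class FrameBuffer:
    """Keeps only the most recent frames so one is ready the instant the shutter fires."""

    def __init__(self, capacity=4):  # 4 frames, matching the figure quoted above
        self._frames = deque(maxlen=capacity)

    def push(self, timestamp_ms, frame):
        # Once the buffer is full, appending silently drops the oldest frame.
        self._frames.append((timestamp_ms, frame))

    def frame_at_shutter(self, shutter_ms):
        # Zero shutter lag: pick the already-captured frame nearest the press.
        if not self._frames:
            raise ValueError("no frames buffered yet")
        return min(self._frames, key=lambda tf: abs(tf[0] - shutter_ms))


buffer = FrameBuffer()
for t in range(0, 200, 33):  # hypothetical preview stream, one frame every 33 ms
    buffer.push(t, f"frame@{t}")

print(buffer.frame_at_shutter(170))
```

The real system then interleaves and fuses differently exposed frames, which this sketch doesn't attempt.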

Apple also added new virtual lens models to properly simulate real bokeh, and added depth control so you could alter the virtual aperture to get just exactly the look you wanted.

The iPhone XR had exactly the same wide-angle camera but only the wide-angle camera, so it used Focus Pixels and segmentation masks to simulate depth effect on that.

This time, they let me get photos I couldn't have even imagined, sometimes really cool, but sometimes exposing shadows and rendering skin in ways that I didn't want.

iPhone 11 and Deep Fusion

And that brings us to the iPhone 11. It bolsters the wide-angle with yet another bigger sensor for better light sensitivity, and 100% Focus Pixels. The telephoto has been brought to f/2.0, so it can capture 40% more light as well.
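For anyone who wants to check the aperture math behind those "more light" numbers, here's a quick sketch. It uses the standard relationship that light gathered scales with the inverse square of the f-number, applied to the nominal f-numbers quoted in this piece. The function names are mine, and real lenses only approximately match their rounded f-numbers.

```python
import math


def light_gain(old_f, new_f):
    """Ratio of light gathered when opening up from old_f to new_f."""
    return (old_f / new_f) ** 2


def stops_gained(old_f, new_f):
    """The same change expressed in stops, where one stop doubles the light."""
    return math.log2(light_gain(old_f, new_f))


# Nominal f-numbers taken from the text above.
changes = {
    "wide-angle f/2.2 -> f/1.8": (2.2, 1.8),
    "telephoto f/2.8 -> f/2.4": (2.8, 2.4),
    "telephoto f/2.4 -> f/2.0": (2.4, 2.0),
}

for label, (old_f, new_f) in changes.items():
    gain = light_gain(old_f, new_f)
    stops = stops_gained(old_f, new_f)
    print(f"{label}: {100 * (gain - 1):.0f}% more light, {stops:.2f} stops")
```

On the nominal numbers, f/2.2 to f/1.8 works out to about 49% more light and f/2.4 to f/2.0 to about 44%, which lines up with the rounded 50% and 40% figures.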

A new, effective 13mm, 120º ultra-wide-angle has also been added to the mix, as has a new Night Mode, and a new technology called Deep Fusion, currently in beta.

The ultra-wide-angle doesn't have any Focus Pixels or OIS, and is 5-element instead of 6-element, so there are limits to what it can do, but it can provide depth data, allowing for real Portrait Mode on the 11 and Portrait Mode on both the wide-angle and telephoto on the 11 Pro. There's a new virtual lens model for the wide-angle to better match our expectations rather than just physical calculations, and High-Key Mono for Portrait Lighting.

Also, semantic rendering, so it can better understand what's in a scene and what to do with it, and multi-scale tone mapping to make Smart HDR even smarter, especially when it comes to skin.

Up to three cameras (telephoto, wide-angle, and ultra-wide-angle) and three different capture methods (Smart HDR, Deep Fusion, and Night Mode) can make the iPhone 11 camera system just sound… well… complicated. But the way Apple has implemented it just wipes a lot of that complexity away.

The basic idea is you shouldn't have to worry or even think about any of it. You should just be able to whip out your camera, take a photo, and get the best damn photo possible regardless of what it is or what the conditions are like when you took it. That's the holy grail here.

But, I know a lot of people, especially people into tech and into cameras, can't just relax and take that photo without knowing exactly what's happening under the covers first.

So, let's break it down.

Understanding the iPhone 11 Cameras

Now, I covered the new cameras and the new interface in my original iPhone 11 reviews, so I won't recapitulate it all here. Suffice it to say, Apple fuses the cameras together at the factory and continually updates all of them, all the time, when you're taking a photo so that you can switch between them with pretty damn remarkable consistency.

It just looks like you're hitting the zoom out for ultra-wide or zoom in for telephoto, but internally, Apple's fusing everything together and sharing all the data, so when you do, the experience is smooth and not jarring, and the exposure, white balance, everything stays the same.

Again, it's the difference between shipping chipsets and feature sets. The capture methods are both simpler in use and much more complicated behind the scenes. Because the iPhone is always buffering images ahead of time, it can gather image data from them to figure out the best way to shoot under any given conditions.

Smart HDR

Smart HDR is used for effects like Portrait Mode, but also for all images on the ultra-wide camera, bright images on the wide-angle, and the very brightest images on the telephoto. Think outside in the middle of the day, where the dynamic range can be high, and where handling harsh contrasts and pulling maximum detail from highlights and shadows is paramount. Now in its second generation, it's been machine-learned to recognize people and process them... as people, to maintain the best highlights, shadows, and skin tones possible.

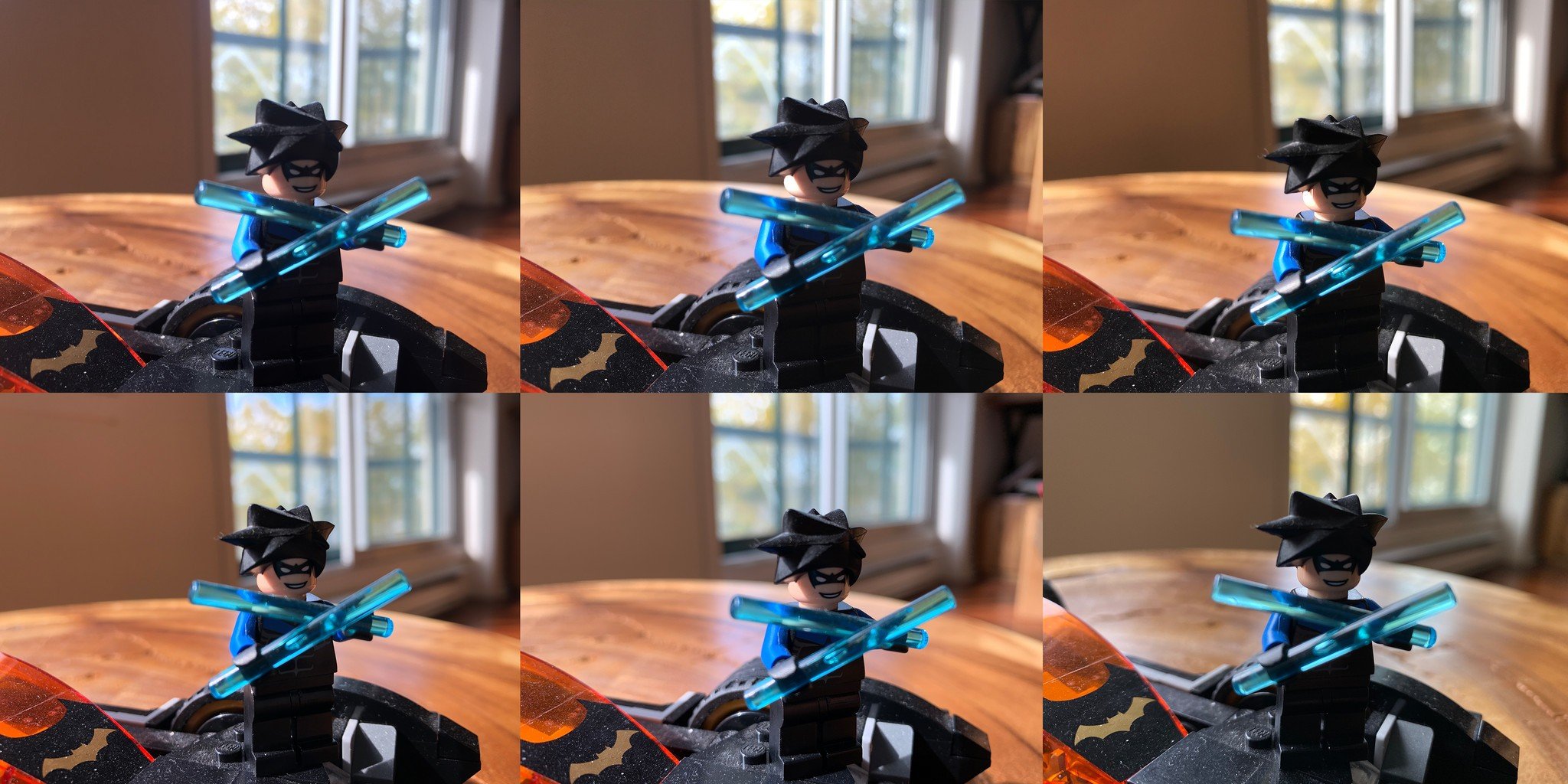

Deep Fusion

Deep Fusion, currently in beta, is used for mid to low-light images, like being inside. The process is … smarter than smart. It takes four standard exposures and four short exposures before capture, and then a long exposure at capture. Then, it takes the best of the short exposures, closest to the time of capture, the one with minimal motion and zero shutter lag, and that gets fed to the machine learning system. Next, it takes the best three of the standard exposures and the long exposure, and fuses that into a single, synthetic long exposure. That gets fed into the machine learning system as well.

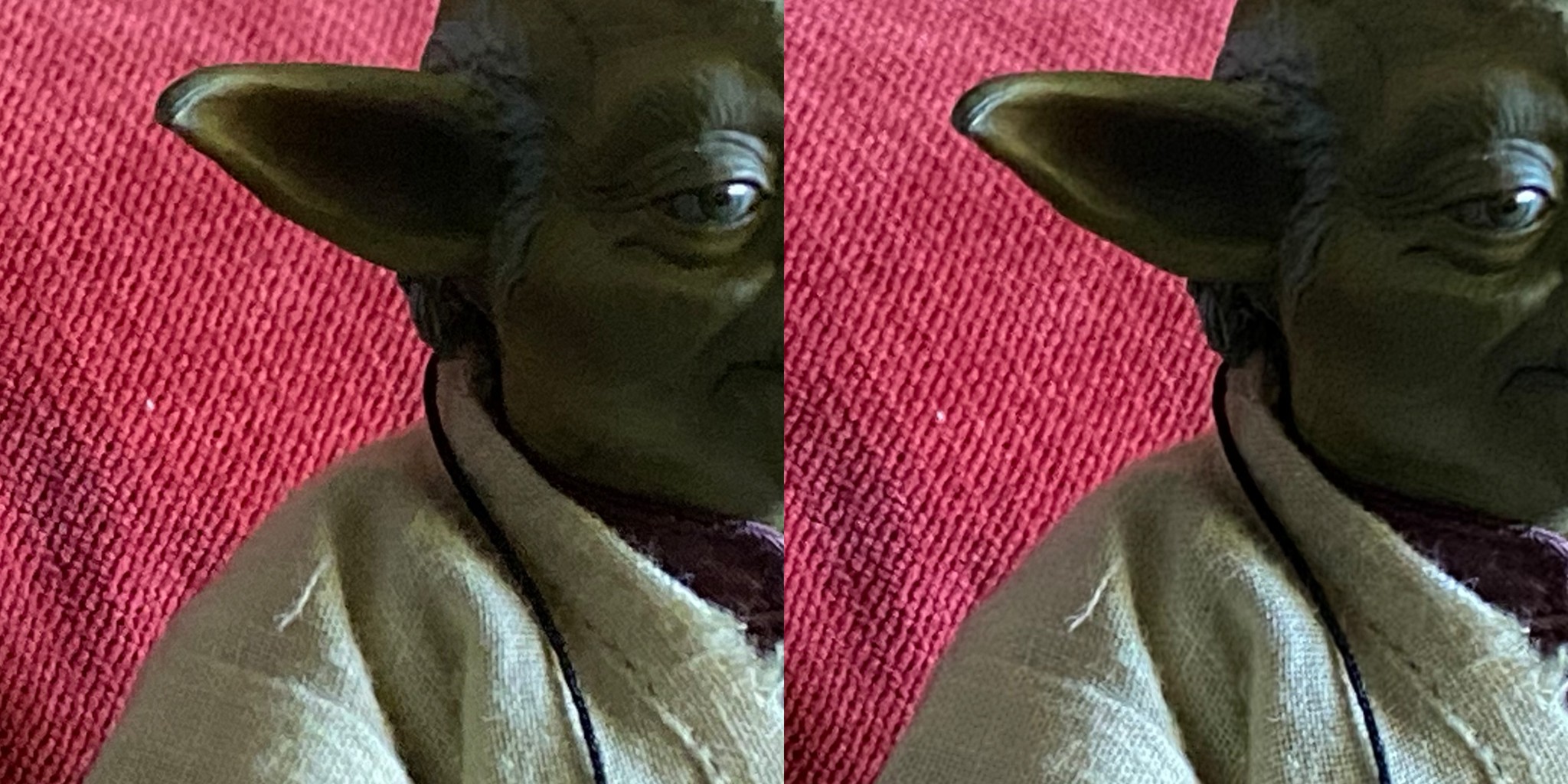

Then, both 12MP images are pushed through a series of neural networks that compare each pixel from each image, within the full context of the image, to put together the best possible tone and detail data for the image, the highest texture with the lowest noise.

It understands faces and skin, skies and trees, wood grain and cloth weave. And optimizes for all of it. The result is a single output image built from all those input images, with the best of all possible sharpness, detail, and color accuracy.
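To make the frame selection described above a little more concrete, here's a rough sketch of just the first two steps: picking the best short exposure, and building the synthetic long exposure from the best three standard frames plus the long one. Everything here is an assumption for illustration. The `Frame` type, the `sharpness` score, and the plain per-pixel average are stand-ins, since Apple hasn't published how it scores or fuses frames, and the neural network stage isn't modeled at all.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Frame:
    kind: str            # "short", "standard", or "long"
    timestamp_ms: int
    sharpness: float     # hypothetical score: higher means less motion blur
    pixels: List[float]  # flattened luminance values, for illustration only


def pick_reference(shorts, shutter_ms):
    """Best short exposure: sharpest first, ties broken by closeness to the shutter press."""
    return max(shorts, key=lambda f: (f.sharpness, -abs(f.timestamp_ms - shutter_ms)))


def synthetic_long(standards, long_frame, keep=3):
    """Average the `keep` sharpest standard frames together with the long exposure.

    A plain mean is a placeholder for the real fusion step.
    """
    best = sorted(standards, key=lambda f: f.sharpness, reverse=True)[:keep]
    stack = best + [long_frame]
    return [sum(values) / len(stack) for values in zip(*(f.pixels for f in stack))]


shorts = [Frame("short", t, s, [0.1, 0.2])
          for t, s in [(0, 0.6), (33, 0.9), (66, 0.9), (99, 0.7)]]
standards = [Frame("standard", t, s, [p, p])
             for t, s, p in [(0, 0.5, 0.2), (33, 0.8, 0.4), (66, 0.7, 0.4), (99, 0.9, 0.4)]]
long_frame = Frame("long", 132, 0.4, [0.8, 0.8])

reference = pick_reference(shorts, shutter_ms=100)
detail_source = synthetic_long(standards, long_frame)
print(reference.timestamp_ms, detail_source)
```

In this toy data, the two sharpest short frames tie, so the one nearer the shutter press wins, and the blurriest standard frame is left out of the synthetic long exposure. Those two results are what would then be handed to the machine learning system.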

Just like portrait mode is meant to create a level of depth beyond what the relatively small camera system is actually able to deliver, Deep Fusion is meant to capture a level of detail that normally requires big glass and a big sensor. But using big compute instead.

Night Mode

Night Mode kicks in below 10 lux on the wide-angle. On the hardware side, it uses that wide-aperture lens and those 100% Focus Pixels to drink in as much light as possible.

On the silicon side, it uses adaptive bracketing, again based on what it determines from the preview. Those brackets can go from very short, if there's more motion, to longer, if there's more shadow. Then it fuses them all together to both minimize blur and maximize the amount of detail recovered.
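Here's one way to picture adaptive bracketing, as a sketch and nothing more. It assumes the preview has already been boiled down to two made-up scores between 0 and 1, `motion` and `shadow`, and it uses an invented rule: more motion pushes toward many short frames, more shadow toward fewer, longer ones. The function name, the scores, the time budget, and the limits are all hypothetical and are not Apple's actual values.

```python
def plan_brackets(motion, shadow, time_budget_s=3.0):
    """Return a list of exposure times in seconds, shortest first.

    motion and shadow are hypothetical 0..1 scores estimated from the preview.
    time_budget_s is a rough cap used only to decide how many frames to take.
    """
    if not (0.0 <= motion <= 1.0 and 0.0 <= shadow <= 1.0):
        raise ValueError("scores must be between 0 and 1")
    # The longest single exposure grows with shadow and shrinks with motion.
    longest = max(0.05, shadow * (1.0 - motion))
    # Shorter exposures mean more frames, clamped to a sane range.
    count = max(2, min(12, round(time_budget_s / longest)))
    # Ramp from short frames (freeze motion) up to the longest (lift shadows).
    return [round(longest * (i + 1) / count, 3) for i in range(count)]


handheld = plan_brackets(motion=0.8, shadow=0.5)  # shaky hands, moderate shadow
tripod = plan_brackets(motion=0.0, shadow=1.0)    # static scene, deep shadow

print(len(handheld), max(handheld))
print(tripod)
```

The shaky, handheld case ends up with many short frames, while the static, dark case gets a few long ones, which is the trade-off described above. The fusion of those brackets is a separate step this sketch leaves out.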

Like the other methods, Night Mode can also understand what's in a scene, including people, faces, parts of faces, and make sure skin tones keep the best color and detail possible.

I did a whole explainer and shootout between the iPhone 11, Pixel 3, Galaxy Note 10, and P30 Pro, so check the link in the description for way more on all that.

Just click

The important thing, though, is that it all just happens when you hit the shutter. There's no overhead for you to manage, no cognitive load to get between you and the picture you want to capture.

The camera simply determines the best capture method, Smart HDR, Deep Fusion, or Night Mode, based on what it's previewing, and then automatically captures using that method.
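Pulling together what's been covered so far, the decision could be sketched something like this. Only the 10 lux Night Mode figure comes from the text. The "bright" thresholds in `BRIGHT_LUX` are placeholders I've invented so the example runs, and the real camera decides from much more than a single lux reading.

```python
from enum import Enum


class Camera(Enum):
    ULTRA_WIDE = "ultra-wide"
    WIDE = "wide-angle"
    TELEPHOTO = "telephoto"


NIGHT_MODE_LUX = 10  # from the text: Night Mode engages below 10 lux on the wide-angle
# Placeholder thresholds; the actual cut-offs are not given anywhere in this piece.
BRIGHT_LUX = {Camera.WIDE: 600, Camera.TELEPHOTO: 2000}


def capture_method(camera, lux):
    """Pick a capture method from the active camera and an estimated light level."""
    if camera is Camera.ULTRA_WIDE:
        return "Smart HDR"    # all ultra-wide images
    if camera is Camera.WIDE and lux < NIGHT_MODE_LUX:
        return "Night Mode"   # very dark scenes on the wide-angle
    if lux >= BRIGHT_LUX[camera]:
        return "Smart HDR"    # bright wide-angle, very bright telephoto
    return "Deep Fusion"      # mid to low light


for camera, lux in [(Camera.ULTRA_WIDE, 5), (Camera.WIDE, 5),
                    (Camera.WIDE, 200), (Camera.TELEPHOTO, 1000)]:
    print(camera.value, lux, "->", capture_method(camera, lux))
```

The point of the sketch is that there's no mode picker anywhere: the choice falls out of which camera you're on and how much light there is.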

Now, that does mean you can't manually select the capture method. You can turn off Night Mode on a shot-by-shot basis if you really want to. But, otherwise, the camera is going to camera.

For Portrait Mode or panoramas, those are far less time-sensitive and far more deliberate choices. So it makes sense to have to choose them. Otherwise, Apple wants you to be able to pull your iPhone from out of your pocket or up off the table and just take a shot without having to lose time thinking about which method to use or switching between methods and then potentially lose the shot.

To deliver the best shot possible in any given situation, while always increasing the limits of best and extremes of situation.

Same reason why we now have QuickTake, to save us even having to switch from still to motion when the moment calls for it.

It's all built on all the hardware, software, and silicon Apple has been delivering so methodically year after year, using what's come before to push even further forward.

Look at the evolution from the iPhone 6s going to 12 megapixels. The iPhone 7 Plus going to f/1.8, DCI-P3, and adding the telephoto for zoom and portrait mode. The iPhone 8 adding computer vision and the neural engine, and the iPhone X improving the telephoto, and both adding Portrait Lighting. The iPhone XR and iPhone XS adding Smart HDR and depth control.

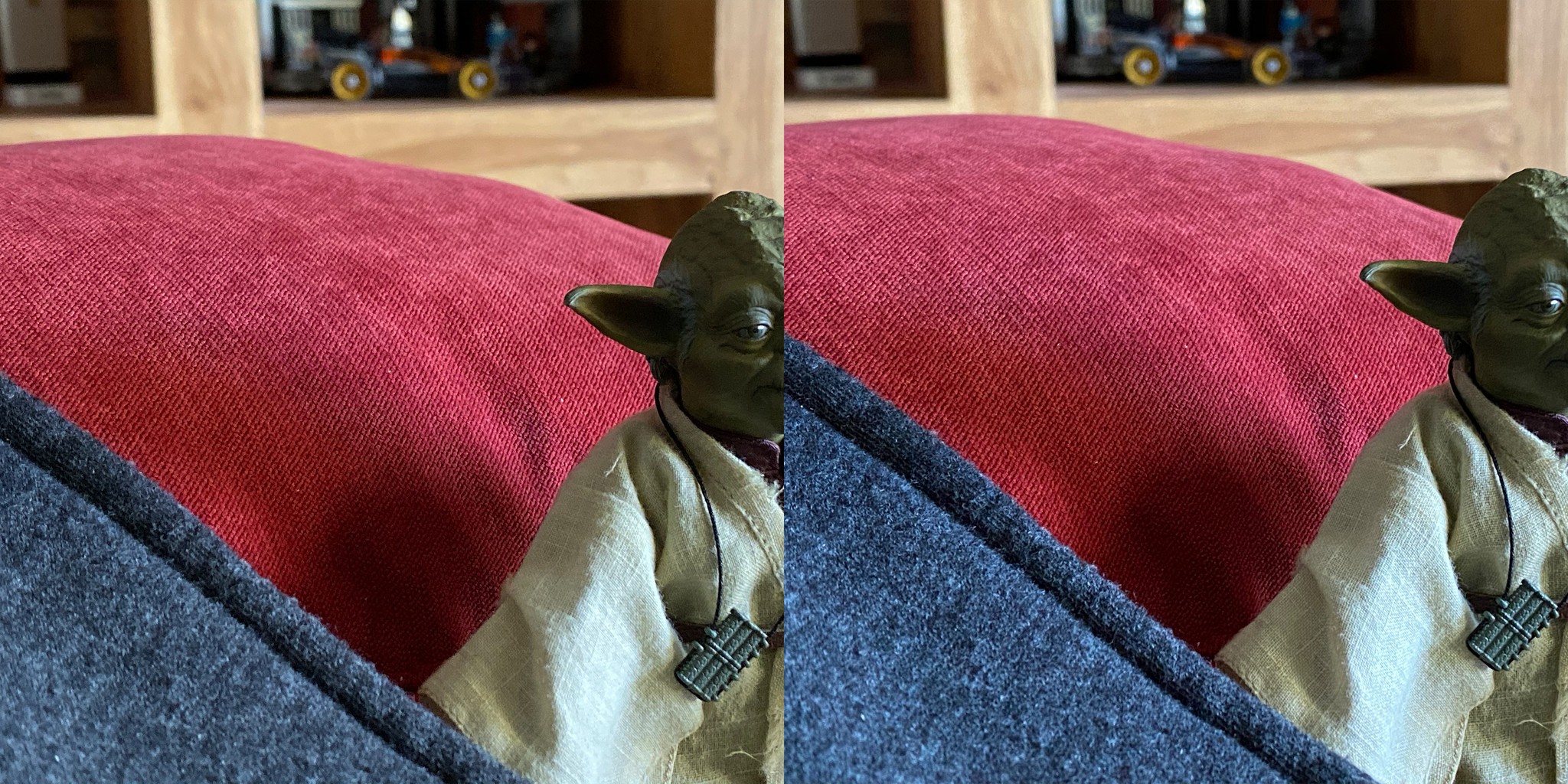

Now, when you look at them compared to the iPhone 11, for regular shots outside and inside, regular and low light, landscapes and macros, portrait mode and night mode, there are a couple of big leaps forward, but also a lot of tiny little steps in between.

What's most impressive to me, where you can really see all of that investment in everything from color science to management, is how little everything else actually changes when camera angles and capture modes are changing.

Sure, some can do more or different than others, just like lenses on a DSLR, but the color and consistency are so well-tuned and maintained, it still always feels like the same camera. And, that's something we haven't really been able to say about phone-based cameras before.

And, you don't just have to take my word for it. Many people in the industry who thought Apple was falling behind in cameras are now saying they're back in the lead.

Should you upgrade?

The iPhone 7 was the most fun I had with a camera phone since the iPhone 4. The iPhone 11 is the most fun I've had since the iPhone 7. But is it enough for you or yours to upgrade?

If photography is at all important to you, and you have the iPhone 6s or SE or older, even up to the iPhone 7 Plus, the difference you get in moving up to the iPhone 11 is very literally like night and day.

If photography is very important to you, and you have the iPhone 8 or even the iPhone X, going to the iPhone 11 can allow you to stretch ultra-wide muscles you never knew you had.

If photography is critical to you, and especially if you didn't like some of the choices Apple made with the iPhone XR or XS Smart HDR, then not only is the iPhone 11 smarter with the HDR, but Deep Fusion and Night Mode will knock your socks off.

And… I won't say I told people so, or even that I informed them thusly, in those videos leading up to the iPhone 11 announcement, but I do love that if you subscribed to this channel and watched those videos, you had all the information and analysis pretty much before anybody else.

But, analysis is one thing. Actual results are another. And, one month later, it's good to see Apple delivering on all that investment and all that promise, and in a way that's as reliable as it is consistent and high-quality, which is also something many other camera phones often still can't say.

Rene Ritchie is one of the most respected Apple analysts in the business, reaching a combined audience of over 40 million readers a month. His YouTube channel, Vector, has over 90 thousand subscribers and 14 million views and his podcasts, including Debug, have been downloaded over 20 million times. He also regularly co-hosts MacBreak Weekly for the TWiT network and co-hosted CES Live! and Talk Mobile. Based in Montreal, Rene is a former director of product marketing, web developer, and graphic designer. He's authored several books and appeared on numerous television and radio segments to discuss Apple and the technology industry. When not working, he likes to cook, grapple, and spend time with his friends and family.