How to use Personal Voice in iOS 17: create a voice assistant with your own voice

Personal Voice is easily one of the best features of iOS 17.

- Compatibility: iPhone XR and later

- Release date: September 18

Personal Voice is one of the most interesting features of iOS 17, Apple's new iPhone software, and we can't wait to try it on the new iPhone 15 and iPhone 15 Pro.

Essentially, Personal Voice lets you train a voice model yourself. For those who aren't up to speed on AI terminology, this means turning your own voice – or a friend or family member's voice – into a part-time steward of your iOS experience.

Yes, it's a bit weird. But we think that, in a world of countless seemingly opaque AI implementations, Personal Voice is a great opportunity to explore and experience machine learning first hand, so you can see how these things are made. However, it's also a great accessibility feature for those who may have a speech impairment.

Even if you don't want to use Personal Voice all the time, it's still worth having a play around with. In this guide, we'll explore how it works.

How to use Personal Voice

Personal Voice is a new feature found in iOS 17. You’ll find the Personal Voice entry in Settings > Accessibility.

In this sub-menu, you can create a new Personal Voice profile. And iOS 17 lets you have multiple profiles at once, meaning you could voice-map everyone in your household if you like.

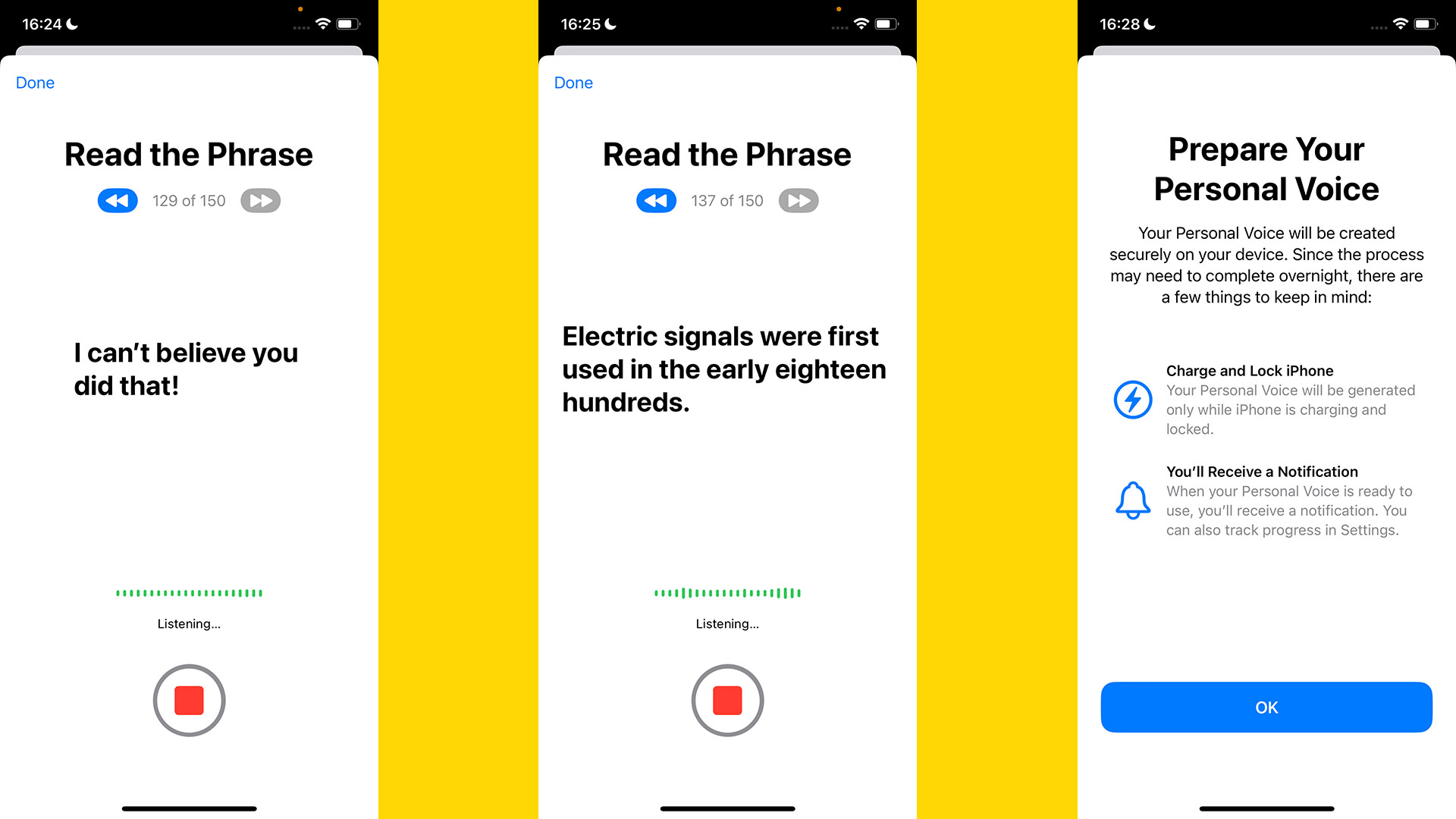

They may not want to do that, though, as the process is lengthy and, for some, awkward. You have to read out 150 phrases, and these are displayed on-screen, one at a time.

Apple says it takes 15 minutes, but it’s the sort of intense exercise that makes time appear to stretch out like pizza cheese. You begin to wonder: am I really speaking normally? And you question whether the end of the exercise will ever come. The middle 50% is the worst, so hang in there.

Once you have recorded all the phrases, you have to plug your iPhone in and leave it alone with the screen off. Creating a voice profile from all those phrases must be a processor-intensive job.

Apple suggests leaving it overnight. I just left the phone alone for a few hours during the day, and it was done.

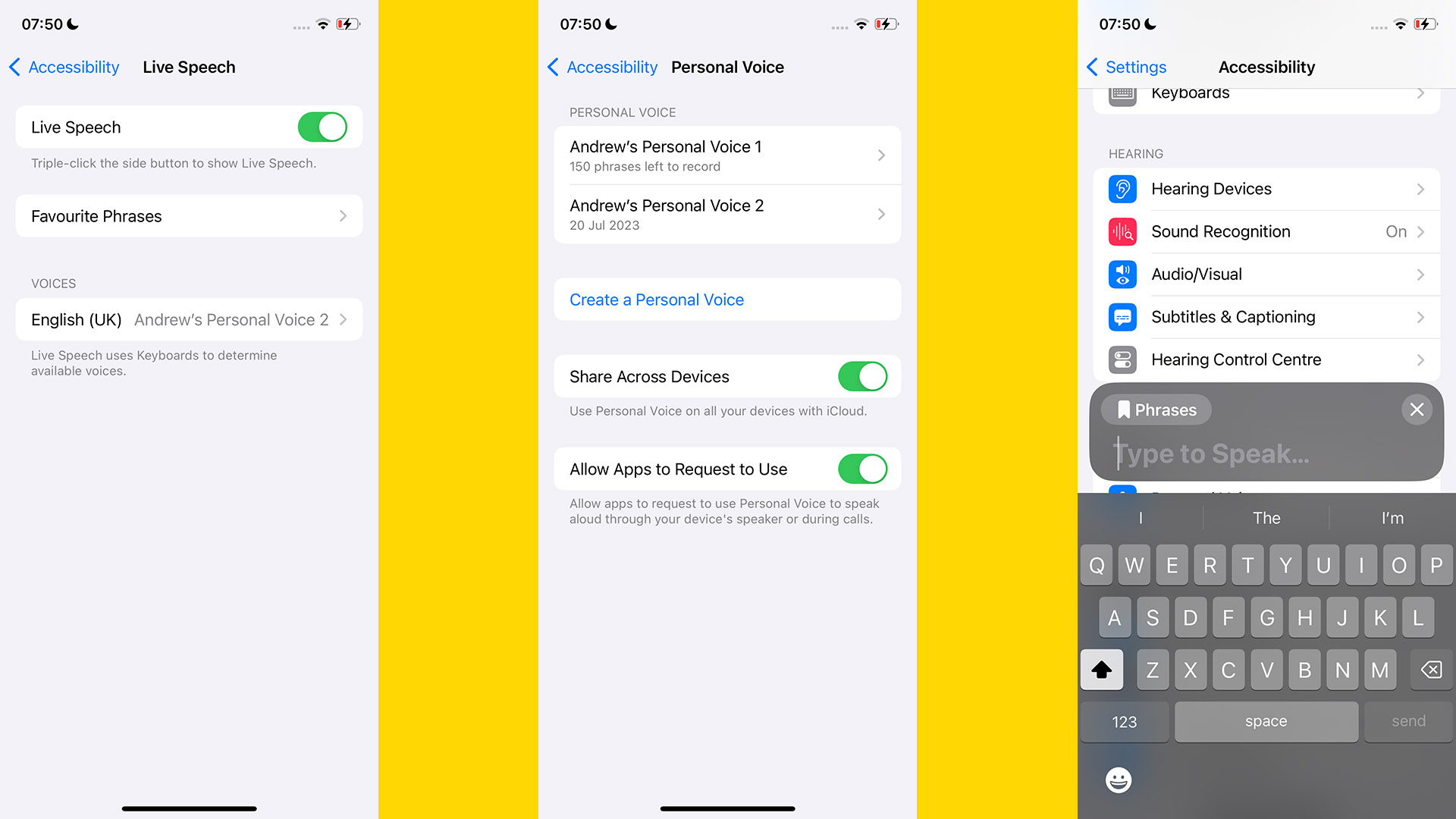

Next, you want to go to Settings > Accessibility > Live Speech and turn Live Speech on using the slider if it is not already enabled.

Now tap Voices. Among the dozens of pre-made voices, you should see an entry for Personal Voice. If you don’t, try restarting your phone. It didn’t appear when I first tested this feature, but it did after a restart.

To actually make your Personal Voice profile speak, just triple-press the iPhone’s side button. This brings up the text entry box. You can also program in stock phrases for easy access in Settings > Accessibility > Live Speech > Favorite Phrases.

Any phrases you type in become accessible through the Live Speech pop-up menu.

How to set up Personal Voice on iOS 17

- Go to Settings > Accessibility > Personal Voice and tap Create a Personal Voice

- Read out the 150 phrases as prompted

- Plug in your iPhone to allow it to process your Personal Voice profile

- When you receive a notification saying Personal Voice is finished, go to Settings > Accessibility > Live Speech. Enable Live Speech, then tap the Voices box and select your Personal Voice profile

- Triple-press the side button to bring up the Live Speech text box, then type to make your Personal Voice speak

Personal Voice: Phrase recording tips

Recording the required 150 phrases is going to become a bit of a trial. Apple’s own recommendations are to talk as you would during a normal conversation and to record in a quiet environment. It also suggests you keep the iPhone 6-12 inches away from your mouth, which stops plosive sounds from causing distortion.

Having done this a couple of times now, my advice is you should remember you can always leave a phrase recording session and come back later. The ones you’ve finished are not lost. You can continue where you left off.

Attempting to do the lot in one go may see you get more and more tongue-tied because most of us aren’t voice-over artists.

Also, if you mess up a phrase, the best strategy is to wait for the Personal Voice wizard to jump to the next phrase, then tap the on-screen back button. It seems like you can hit a repeat button during recording, but this doesn’t quite work as intended on the beta.

Is Personal Voice any good?

Apple’s Personal Voice profile nails certain aspects of a person’s voice. Texture, timbre, and certain habits of pronunciation are all represented here.

For example, despite being British rather than American, some of my pronunciations of T sounds end up more like Ds, a classic American trait. It’s apparently called an alveolar tap by the experts. Personal Voice recreated this effect in the profile of my own voice.

However, there are limits. You are unlikely to be able to fool people into thinking they are actually talking to the person on which the profile is based, and at times the profile sounds highly synthetic.

It’s kind of the equivalent of a video game character creator in which you can map a digital image of a real person’s face onto a character. But it is far more impressive: we have had such systems in games for decades, whereas Personal Voice seems genuinely new.

What apps can you use with Personal Voice?

As Personal Voice is currently in beta, we can’t yet be sure of what scope it will eventually have. Apple says you can use it in FaceTime, the phone app, and other apps that use assistive communication tech.

However, it is unclear how deep integration with third-party apps will be. Live Speech pops up over other apps, so it can naturally be used in apps that pick up all audio from the microphone.

Is Personal Voice on iOS 17 worth using?

Personal Voice is one of the more uplifting uses of machine learning and AI, which at times can seem to spawn things largely intended to maximize profits for companies and reduce required staff. However, it’s the kind of tool you hope you never have to use.

In the darkest use case, you might use Personal Voice to preserve your voice or that of a loved one if they are gradually losing that ability through some degenerative condition.

It could also be useful for those with speech issues, or for people with severe long COVID or chronic fatigue, for whom simple conversations can be difficult at times.

Still, Personal Voice is, at present, a simulacrum of a person’s voice. It reminds me of what you often get when you try to compose orchestral music using digital instruments.

No matter how well-sampled a virtual violin is, it won’t sound right unless you also program in the dynamics of the player: the vibrato, the speed of the bow movements, the subtle changes in volume, and the tonal variations related to the pressure of the bow against the strings. Without all that stuff, a programmed-in violin sounds fake.

There are equivalents to all of these in normal speech too, and Apple rather sensibly plays it very safe when injecting them into the synthesized voice. They all hold meaning, and adopting a conservative approach means Apple does not end up implying meaning that was not intended. But it does mean your Personal Voice still ends up sounding robotic a lot of the time.

Andrew is a freelance contributor who has written about tech and entertainment since 2008, and covered the rise of the iPhone first-hand. Today he writes about audio, fitness tech, computing and TV/film, as well as mobile tech. Publications in his back catalogue include WIRED, Ars Technica, TechRadar, T3, Stuff, What HiFi and Forbes, among others.