Siri, artificial intelligence, and accessibility

A lot has been said recently about Apple's prospects in artificial intelligence and machine learning. One aspect of the discussion that I haven't seen considered is the accessibility ramifications of artificial intelligence in assistants like Siri. (I focus on Siri because it's the AI I have the most experience using.)

I've long been a proponent of voice-driven interfaces as assistive technology, which makes Siri's slow development all the more frustrating. This is particularly true of Siri's ability to understand you if you have trouble speaking.

The yin and yang of Siri (its obvious accessibility benefits combined with its obvious linguistic shortcomings) has left me feeling torn about its long-term utility for accessibility, and about that of AI in general.

Accentuating the positive

Siri has the potential to be so much more inclusive and empowering than it is. Nonetheless, I am appreciative of what Siri is capable of presently. So it's worth giving credit where credit is due.

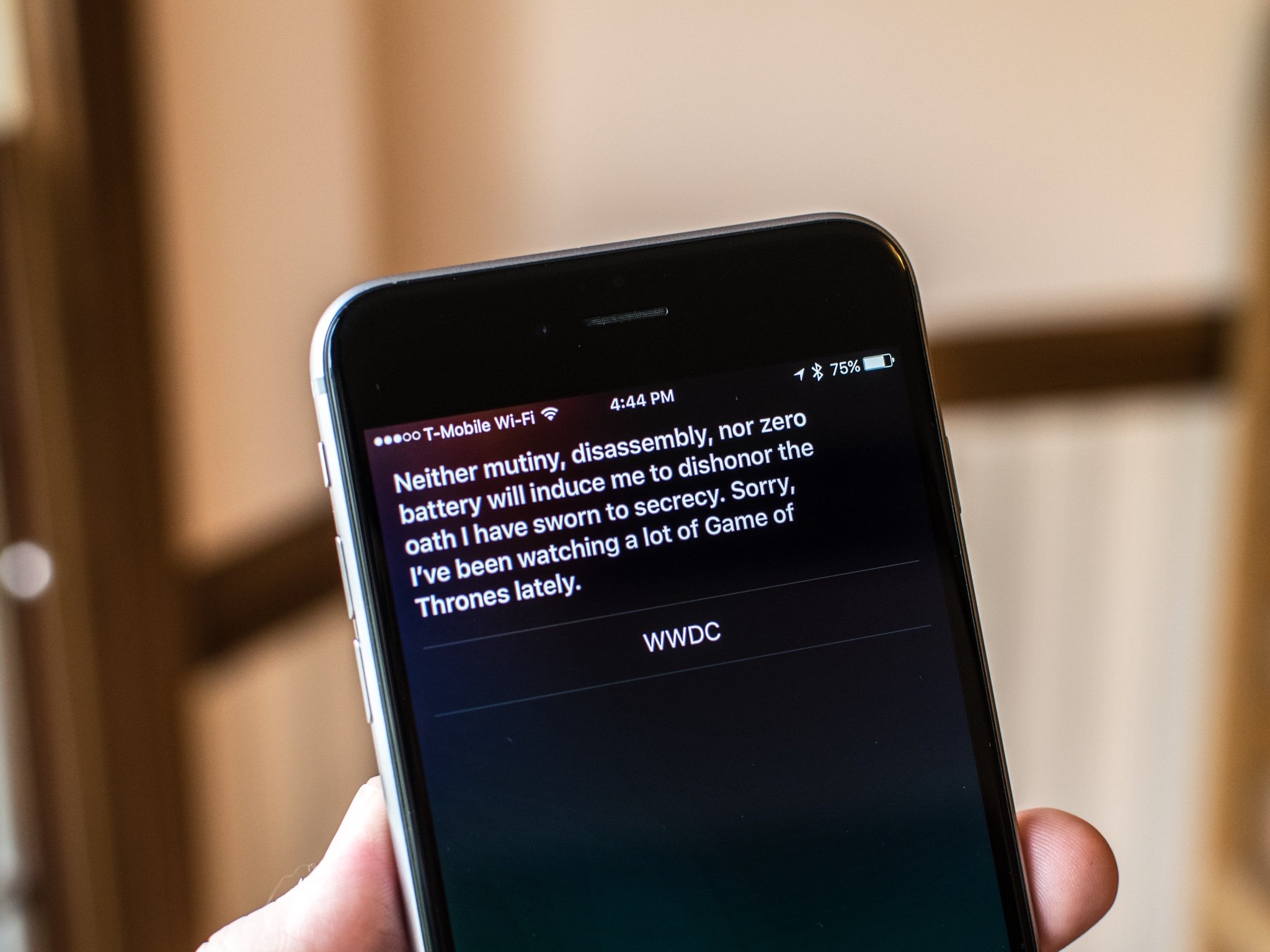

I find myself using Siri more often lately. I ask about the weather, check sports scores, and send text messages. Using the "Hey, Siri" prompt is quicker than unlocking my phone and going to, say, the Weather app. Likewise, using only my voice saves my eyes and hands from fatigue. Thus, Siri isn't only a feature of convenience; it's an accessibility tool as well.

I think one reason I'm relying on Siri more is that Siri has gotten faster and (somewhat) more accurate at parsing and completing my requests. I don't have to wait long for Siri to tell me the weather or show me the MLB standings. Granted, these tasks are all things Siri can and should do well.

The bigger reason for using and appreciating Siri is accessibility. This is not to be taken lightly. Siri is truly useful for someone with visual and motor delays, because you can do things by using just your voice.


"Sorry, I didn't get that"

Despite my recent uptick in using Siri, I've historically avoided it because it handles my speech impediment poorly. It's hard enough for Siri to understand normal speech; the problem is exacerbated with a speech delay.

I'm a stutterer, which causes me a lot of social anxiety. It's hard at times to converse with people because of it, out of fear of judgment or shame that I inevitably will stutter. Sometimes I get so nervous that I will talk as little as possible (or avoid it altogether) because of my speech. I share these feelings not to garner pity or sympathy, but rather to explain how stuttering affects me emotionally.

Siri isn't a real person, of course, but the fact of the matter is that voice-driven interfaces are built assuming normal fluency. This is to be expected: most people don't stutter. But I (and many others) do, so using Siri can be incredibly frustrating. While accuracy has gotten better over time, there's no getting around the fact that abnormal speech patterns like mine don't mesh well with Siri. Stuttering wreaks havoc on the experience.

With this in mind, I really hope there is something Apple can do to improve Siri's ability to understand non-standard speech. Anecdotally, I've heard from people in the past who say this is nigh impossible given the complexities of language and the limitations of software, but hope springs eternal.

I occasionally use voice search in YouTube's iOS app — YouTube being owned by Google, and Google being renowned for having great speech recognition — and it seems to do a much better job at understanding me. Again, anecdotal evidence, but it gives me hope that Apple can make Siri more adept in this regard. Until then, my only recourse is to slow down when talking while holding the Home button so I won't be interrupted mid-sentence.

A text-based mode for Siri, where you could type commands in lieu of vocalizing them, would alleviate the language barrier for me. The downside, however, is it would negate the gains you get with a hands-free experience.

The ideal scenario is one where you'd have both text input and a fluent voice component. Can Apple deliver that? I sure hope so. After all, the whole premise of Siri is that you talk to it.

Why understanding me matters

You might be reading this article and thinking to yourself, "Wow, Steven's bemoaning something he said is a hard problem to solve." While it's true that programming a robot to behave more like a human is difficult, to say the least, the reason I'm so passionate about Siri and speech impediments is that there are many situations where Siri could be even better at helping people with disabilities.

Consider HomeKit. Sometimes I have trouble with light switches and opening doors because of fine-motor issues caused by my cerebral palsy. While my house has no HomeKit-compatible appliances at the moment, I often think about how cool it would be to be able to tell Siri to turn on the lamp or lock the front door. It would certainly save me a lot of struggle and aggravation in finding the light switch or turning the door lock.

Now consider the new SiriKit announced as part of iOS 10. This is a much-needed (and, some would argue, overdue) addition to Siri, and it will help Apple catch up with the competition when it comes to ride booking, messaging, searching for photos, making payments, placing VoIP calls, and more.

These tasks seem trivial and mundane at first, but even the little things can prove tricky if you have disabilities.

Bottom line

The majority of the conversation about AI lately has centered on being faster, more aware, and more integrated. Those are all worthy aspirations, but from my perspective, being faster and more integrated means little without great accessibility. Siri today is serviceable in doing the things I want, but it needs to make a quantum leap for me to wholly embrace the supposed future of computing.

Speech delays are disabilities, too, and I strongly believe Siri's weakness in fully accommodating them is a valid concern.

Apple has said in its last couple of earnings calls that services are becoming a bigger part of its business, and that it continues to invest in them. In Siri's case, I hope the company has invested heavily in making it more verbally accessible. Nothing would make me happier than to see this demoed at WWDC. Any other capability would be icing on the cake.

I like Siri, I really do. I just want to be able to interact with it better, because it's been a bumpy five years. More importantly, I want Siri to reach its full potential when it comes to accessibility.

Steven is a freelance tech writer who specializes in iOS Accessibility. He also writes at Steven's Blog and co-hosts the @accessibleshow podcast. Lover of sports.