Apple details hand and body pose detection in WWDC 2020 session

iMore offers spot-on advice and guidance from our team of experts, with decades of Apple device experience to lean on. Learn more with iMore!

What you need to know

- Apple has told developers about new capabilities in its Vision framework.

- It can be used to help an app detect body and hand poses in photos and videos.

- It can also analyze movement and trigger an action based on a gesture, like taking a photo.

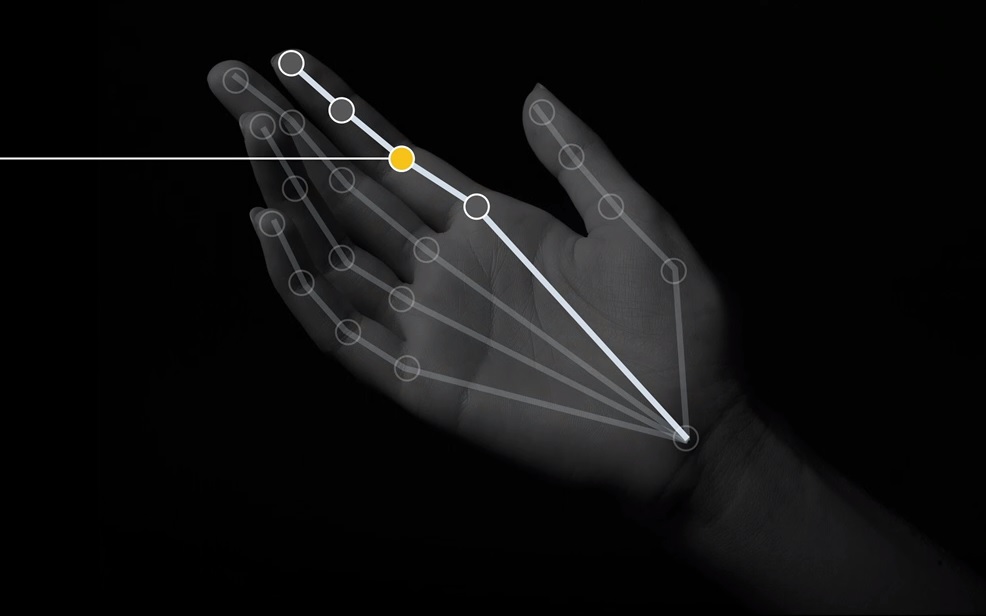

A new Apple developer session for WWDC 2020 has detailed how developers can use new features in Apple's Vision framework to detect body and hand poses in photos and videos.

With iOS 14 and macOS Big Sur, Apple is adding a new capability that lets developers build apps that detect hand and body poses and movements in photos and videos. From the video summary:

Explore how the Vision framework can help your app detect body and hand poses in photos and video. With pose detection, your app can analyze the poses, movements, and gestures of people to offer new video editing possibilities, or to perform action classification when paired with an action classifier built in Create ML. And we'll show you how you can bring gesture recognition into your app through hand pose, delivering a whole new form of interaction. To understand more about how you might apply body pose for Action Classification, be sure to also watch the "Build an Action Classifier with Create ML" and "Explore the Action & Vision app" sessions. And to learn more about other great features in Vision, check out the "Explore Computer Vision APIs" session.
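As a rough illustration of the body pose detection the summary describes, here is a minimal Swift sketch that checks whether a person in a still image has both arms raised. The helper names `armsRaised` and `detectArmsRaised`, the "wrists above shoulders" rule, and the 0.3 confidence cutoff are assumptions made for this example, not something Apple's session prescribes. It also assumes a recent SDK in which the request's `results` property is typed.

```swift
import Foundation
#if canImport(Vision)
import Vision
#endif

/// Hypothetical rule: both wrists are higher than their shoulders.
/// Vision reports normalized points with the origin at the lower left,
/// so a larger y value means higher up in the image.
func armsRaised(leftWrist: CGPoint, rightWrist: CGPoint,
                leftShoulder: CGPoint, rightShoulder: CGPoint) -> Bool {
    return leftWrist.y > leftShoulder.y && rightWrist.y > rightShoulder.y
}

#if canImport(Vision)
/// Runs a body pose request on one image and applies the rule above
/// to the first person found. Returns false if no usable pose is detected.
@available(iOS 14.0, macOS 11.0, *)
func detectArmsRaised(in image: CGImage) throws -> Bool {
    let request = VNDetectHumanBodyPoseRequest()
    let handler = VNImageRequestHandler(cgImage: image, options: [:])
    try handler.perform([request])

    guard let body = request.results?.first else { return false }
    let joints = try body.recognizedPoints(.all)
    guard let lw = joints[.leftWrist], let rw = joints[.rightWrist],
          let ls = joints[.leftShoulder], let rs = joints[.rightShoulder]
    else { return false }

    // Assumed cutoff: ignore joints the framework is unsure about.
    let minConfidence: VNConfidence = 0.3
    guard [lw, rw, ls, rs].allSatisfy({ $0.confidence > minConfidence })
    else { return false }

    return armsRaised(leftWrist: lw.location, rightWrist: rw.location,
                      leftShoulder: ls.location, rightShoulder: rs.location)
}
#endif
```

A hand-written rule like this only covers one simple pose. For recognizing actions over time, the summary points to an action classifier built in Create ML.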

The technology can be used in apps for sports analysis, image classification and analysis, object tracking, and more. Not only can it analyze what's happening on the screen, it can also detect gestures that then trigger actions. For example, making a certain hand pose in front of the camera could make your iPhone automatically take a photo. The framework does have a few limitations: it doesn't perform as well when people are wearing gloves, bending over, upside down, or wearing flowy or robe-like clothing.
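To make the gesture-trigger idea concrete, the following Swift sketch treats a thumb-and-index pinch as the "certain pose" and calls a closure when it sees one. The names `isPinching` and `handlePinch`, the pinch gesture itself, and the 0.05 distance and 0.3 confidence thresholds are all assumptions for illustration. A real camera app would run this on live video frames rather than a single image.

```swift
import Foundation
#if canImport(Vision)
import Vision
#endif

/// Hypothetical gesture test: thumb tip and index tip are close together.
/// Points are in Vision's normalized coordinates (0 to 1 on each axis).
func isPinching(thumbTip: CGPoint, indexTip: CGPoint,
                threshold: CGFloat = 0.05) -> Bool {
    let dx = thumbTip.x - indexTip.x
    let dy = thumbTip.y - indexTip.y
    return (dx * dx + dy * dy).squareRoot() < threshold
}

#if canImport(Vision)
/// Detects one hand in the image and calls `onPinch` if it is pinching.
/// `onPinch` stands in for whatever the app wants to do, such as taking a photo.
@available(iOS 14.0, macOS 11.0, *)
func handlePinch(in image: CGImage, onPinch: () -> Void) throws {
    let request = VNDetectHumanHandPoseRequest()
    request.maximumHandCount = 1

    let handler = VNImageRequestHandler(cgImage: image, options: [:])
    try handler.perform([request])

    guard let hand = request.results?.first else { return }
    let thumb = try hand.recognizedPoint(.thumbTip)
    let index = try hand.recognizedPoint(.indexTip)

    // Assumed cutoff: skip low-confidence points, for example a gloved hand.
    guard thumb.confidence > 0.3, index.confidence > 0.3 else { return }

    if isPinching(thumbTip: thumb.location, indexTip: index.location) {
        onPinch()
    }
}
#endif
```

Keeping the distance check separate from the Vision call means the gesture rule can be tuned and tested without a camera or test images.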

Stephen Warwick has written about Apple for five years at iMore and previously elsewhere. He covers all of iMore's latest breaking news regarding all of Apple's products and services, both hardware and software. Stephen has interviewed industry experts in a range of fields including finance, litigation, security, and more. He also specializes in curating and reviewing audio hardware and has experience beyond journalism in sound engineering, production, and design.

Before becoming a writer Stephen studied Ancient History at University and also worked at Apple for more than two years. Stephen is also a host on the iMore show, a weekly podcast recorded live that discusses the latest in breaking Apple news, as well as featuring fun trivia about all things Apple. Follow him on Twitter @stephenwarwick9