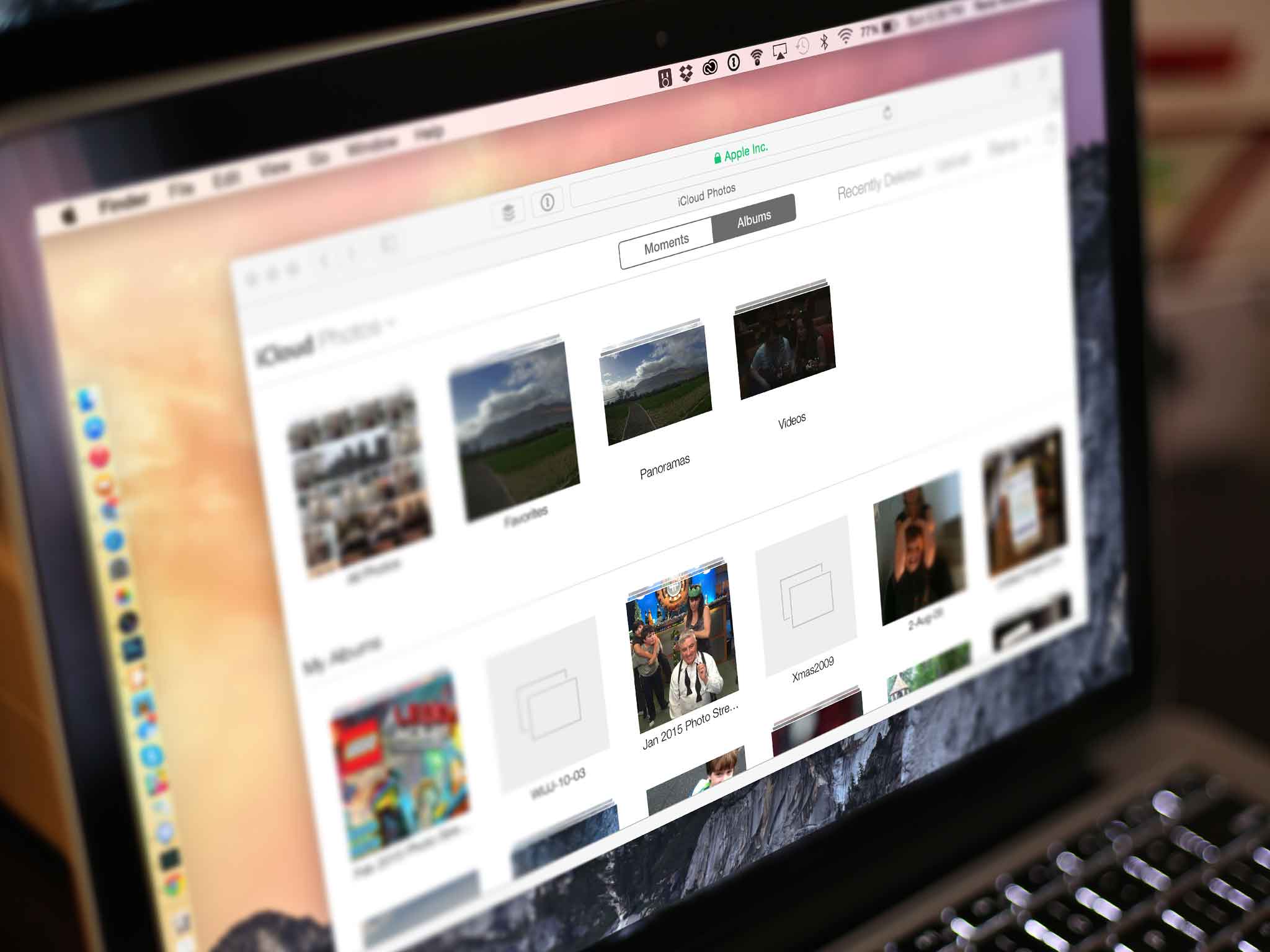

Apple says it's scanning photos to weed out child abusers

What you need to know

- Jane Horvath, Apple's chief privacy officer, spoke at CES yesterday.

- Horvath said Apple uses technology to screen for specific photos.

- Images found are "reported."

This story has been updated to reflect a change made to the original story from The Telegraph. From the source article: "This story originally said Apple screens photos when they are uploaded to iCloud, Apple's cloud storage service. Ms Horvath and Apple's disclaimer did not mention iCloud, and the company has not specified how it screens material, saying this information could help criminals."

Jane Horvath, Apple's Senior Director of Global Privacy, spoke during a CES panel yesterday and confirmed that Apple scans photos to ensure that they don't contain anything illegal.

The Telegraph reports:

Apple scans photos to check for child sexual abuse images, an executive has said, as tech companies come under pressure to do more to tackle the crime. Jane Horvath, Apple's chief privacy officer, said at a tech conference that the company uses screening technology to look for the illegal images. The company says it disables accounts if Apple finds evidence of child exploitation material, although it does not specify how it discovers it.

Apple updated its privacy policy last year, but it isn't clear exactly when Apple started scanning images to ensure they are above board. The company also has a web page dedicated to child safety, which states:

Apple is dedicated to protecting children throughout our ecosystem wherever our products are used, and we continue to support innovation in this space. We have developed robust protections at all levels of our software platform and throughout our supply chain. As part of this commitment, Apple uses image matching technology to help find and report child exploitation. Much like spam filters in email, our systems use electronic signatures to find suspected child exploitation. We validate each match with individual review. Accounts with child exploitation content violate our terms and conditions of service, and any accounts we find with this material will be disabled.
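To make the "electronic signatures" comparison concrete, here is a minimal Python sketch of signature matching in general. It is not Apple's method, which the company has not specified. The signature list, the function names, and the use of SHA-256 are all assumptions for illustration. An exact cryptographic hash like this only matches byte-identical files, whereas tools such as PhotoDNA use robust hashes designed to survive resizing and re-encoding.

```python
import hashlib

# Hypothetical signature list, filled with placeholder data for illustration.
KNOWN_SIGNATURES = {
    hashlib.sha256(b"example-flagged-bytes").hexdigest(),
}


def signature(data: bytes) -> str:
    """Return a hex signature for the given bytes (SHA-256 as a stand-in)."""
    return hashlib.sha256(data).hexdigest()


def needs_review(data: bytes) -> bool:
    """Return True when the signature matches a known one.

    A match only queues the item for individual (human) review;
    it is not treated as a final decision.
    """
    return signature(data) in KNOWN_SIGNATURES
```

In this sketch a match is only a trigger for the individual review that Apple's statement mentions, much as a spam filter flags a message rather than deleting it outright.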

While there will surely be people who have an issue with this, Apple isn't the first company to scan images in this way. Many companies use software called PhotoDNA – a solution that was specifically designed to help prevent child exploitation.

"By working collaboratively with industry and sharing PhotoDNA technology, we're continuing the fight to help protect children." – Courtney Gregoire, Assistant General Counsel, Microsoft Digital Crimes Unit

Now that's surely something we can all agree with.


Oliver Haslam has written about Apple and the wider technology business for more than a decade with bylines on How-To Geek, PC Mag, iDownloadBlog, and many more. He has also been published in print for Macworld, including cover stories. At iMore, Oliver is involved in daily news coverage and, not being short of opinions, has been known to 'explain' those thoughts in more detail, too.

Having grown up using PCs and spending far too much money on graphics cards and flashy RAM, Oliver switched to the Mac with a G5 iMac and hasn't looked back. Since then he's seen the growth of the smartphone world, backed by iPhone, and new product categories come and go. Current expertise includes iOS, macOS, streaming services, and pretty much anything that has a battery or plugs into a wall. Oliver also covers mobile gaming for iMore, with Apple Arcade a particular focus. He's been gaming since the Atari 2600 days and still struggles to comprehend the fact he can play console-quality titles on his pocket computer.