Latest about Twitter

Your X posts could be training its Grok AI - here's how to opt out

By Lloyd Coombes published

If you're using X (formerly Twitter), your data could be used to train its AI model, Grok.

X opening random links every time you click on a post? It’s not just you — why isn’t everyone talking about this wild bug?

By Tammy Rogers published

If you click on an image in X's iPhone app, there's a chance you'll find yourself taken to a completely unrelated webpage — what is going on?

Elon Musk announces X update he claims FaceTime can't match

By John-Anthony Disotto published

X is getting video and audio calls with a "unique" combination of features.

Looks like living near Twitter X is now even worse than using Twitter X

By Stephen Warwick published

Twitter is under investigation over a massive strobe sign affixed to the top of its HQ displaying its new X logo.

Twitter gets its 'X' iPhone name change, App Store rules be damned

By Oliver Haslam published

App Store rules looked set to block Twitter’s rebrand to X on iPhone, but it just happened anyway.

Twitter may not get to change iOS app name to 'X' due to Apple's App Store rules

By Palash Volvoikar published

Twitter's rebrand to X is facing another setback as it's unable to rename the iOS app to X due to Apple's App Store rules.

Twitter introduces rate limits, locking users out of the site

By Palash Volvoikar published

Twitter has introduced rate limits, restricting users to viewing a limited number of tweets per day, leading to widespread backlash.

Elon Musk sets his sights on iPhone's FaceTime feature with Twitter audio and video calls

By John-Anthony Disotto published

Elon Musk has announced voice and video calls for Twitter in an attempt to take on Apple's FaceTime.

Twitter's blue checkmark farce is hilarious (and borderline illegal)

By Oliver Haslam published

Twitter’s handing out blue checkmarks like they’re candy right now, upsetting just about everyone — including those in receipt of them.

Twitter is about to take everyone's blue checkmarks away, unless you pay for one

By Stephen Warwick published

Twitter is going to remove all legacy verified checkmarks starting April 1, meaning the only way to get verified on Twitter is to pay for it.

Elon Musk broke Twitter because Joe Biden got more likes than him

By Stephen Warwick published

A new inside report has revealed Elon Musk flew straight to Twitter HQ from the Super Bowl, demanding engineers fix his engagement after a tweet by Joe Biden got more likes than his.

Here's why everyone, even Elon Musk, is locking their Twitter accounts

By Stephen Warwick published

Elon Musk and others are setting their Twitter accounts to private in order to test a circulating theory that making your account private drastically improves engagement.

Twitter offers weak-sauce response to blocking third-party apps

By Oliver Haslam published

Twitter has confirmed it is "enforcing its long-standing API rules" against third-party apps like Tweetbot.

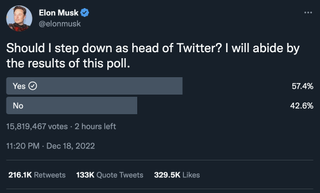

Elon Musk confirms he will resign as Twitter CEO (but on his terms)

By Palash Volvoikar published

Elon Musk has confirmed that he will resign as Twitter CEO after losing a Twitter poll about the decision.

Elon Musk is actively looking for a new Twitter CEO, says report

By Palash Volvoikar published

Elon Musk is actively looking for a new Twitter CEO after losing a Twitter poll asking if he should stop heading the social media company.

Elon Musk says he could step down from Twitter following shock poll and controversial linking policy debacle

By Stephen Warwick published

Elon Musk might be about to step down as head of Twitter, following a poll in which nearly 60% of respondents said he should give up the role.

Apple has "fully resumed" advertising on Twitter, says Musk

By Palash Volvoikar published

Apple has fully resumed advertising on Twitter, Elon Musk said in a recent Twitter Spaces conversation.

How to download all of your old Tweets and archive them

By Stephen Warwick published

Twitter might be dying, but here's how to save your memories.