TrueDepth vs. back camera: Which iPhone X portrait mode is better?

iMore offers spot-on advice and guidance from our team of experts, with decades of Apple device experience to lean on. Learn more with iMore!

When I first had a chance to play with the iPhone X's TrueDepth front-facing camera system during the last iPhone event, I left Steve Jobs Theater with cameras on the brain.

TrueDepth vs. dual lens

Thanks to physics, it's highly unlikely we'll ever see true shallow depth of field on an iPhone — there's just not enough room in the casing for a proper lens. But Apple (and its competitors) have been hard at work building effective simulations for various "bokeh" depth of field effects.

On the iPhone X, Apple has implemented these effects in two distinct ways: On the front, the TrueDepth system's infrared camera and dot projector help measure depth, while the dual-lens rear camera system estimates depth by using the two lenses and machine learning.

Both techniques make up Apple's "Portrait mode," but they use very different technology to achieve the same result. When you use the TrueDepth system to take a selfie, the iPhone X creates a depth map using the system's IR sensors. The dual-lens system, in contrast, estimates depth from the disparity (the slight shift in where an object appears) between what the wide-angle lens sees and what the telephoto sees, creating a multi-point depth map from that data.
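To make the dual-lens idea concrete, here is a minimal sketch of the textbook stereo relation, where depth equals focal length times lens spacing divided by disparity. It is not Apple's pipeline: the focal length in pixels, the lens spacing, and the disparity values are all hypothetical numbers chosen for illustration, and the sketch assumes both views have already been rescaled to a common field of view.

```python
def depth_from_disparity(disparity_px, focal_length_px, baseline_m):
    """Pinhole stereo relation: depth = focal_length * baseline / disparity."""
    if disparity_px <= 0:
        # No measurable shift between the two views: treat as infinitely far.
        return float("inf")
    return focal_length_px * baseline_m / disparity_px


# Hypothetical values, NOT iPhone X specifications.
FOCAL_LENGTH_PX = 2800.0  # focal length expressed in pixels
BASELINE_M = 0.01         # spacing between the two lenses, in metres

# Pixel shift of four scene points between the two views (hypothetical).
disparities = [56.0, 28.0, 14.0, 0.0]
depths = [depth_from_disparity(d, FOCAL_LENGTH_PX, BASELINE_M) for d in disparities]

for d, z in zip(disparities, depths):
    print(f"disparity {d:5.1f} px -> depth {z:.2f} m")
```

The bigger the shift, the closer the object. Distant backgrounds barely move between the two views, which is one reason a two-lens estimate gets coarse at range and leans on machine learning to fill in the gaps.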

The difference between the two is all in the collection of data: Once your iPhone X has that information, it's processed in similar ways by the on-board image signal processor (ISP) and turned into depth of field information, producing artificial foreground and background blurs around your subject.
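As a rough illustration of that last step, the sketch below blurs a single row of pixels by an amount that grows with each pixel's distance from the focal plane. It is a toy under stated assumptions, not what the ISP actually does: the `strength` and `max_radius` parameters are made up, and a plain box blur like this lets the subject bleed into the background, which a real pipeline would try to avoid.

```python
def blur_radius(depth_m, focus_depth_m, strength=4.0, max_radius=3):
    """Blur radius in pixels, growing with distance from the focal plane.

    `strength` and `max_radius` are hypothetical tuning knobs.
    """
    return min(max_radius, int(round(strength * abs(depth_m - focus_depth_m))))


def apply_depth_blur(pixels, depths, focus_depth_m):
    """Box-blur each pixel using a window sized by that pixel's depth."""
    out = []
    for i, depth in enumerate(depths):
        r = blur_radius(depth, focus_depth_m)
        lo, hi = max(0, i - r), min(len(pixels), i + r + 1)
        window = pixels[lo:hi]
        out.append(sum(window) / len(window))
    return out


# One row of brightness values and a matching depth map (both hypothetical).
row = [10, 200, 10, 200, 10, 200]
depth_row = [1.0, 1.0, 1.0, 3.0, 3.0, 3.0]  # subject at 1 m, background at 3 m

blurred = apply_depth_blur(row, depth_row, focus_depth_m=1.0)
print(blurred)
```

Pixels at the focus distance keep their original values, while the background's hard light-dark pattern is averaged toward a flat tone. The quality of the depth map decides where that transition happens, which is why the two cameras can render the same scene so differently.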

The fight for focus

Even if it was primarily developed for Face ID, the TrueDepth system is arguably a huge leap forward in depth mapping and camera technology. So I got curious: Could the TrueDepth-powered front camera take better depth of field photos than the rear camera?

To test this question, I took my iPhone X on a short photo adventure, attempting to snap identical photos with the front-facing and rear-facing cameras.

There are some caveats in this test, of course: With different focal lengths and apertures (2.87mm and ƒ/2.2 for the front-facing camera vs. 6mm and ƒ/2.4 for the rear-facing), framing the exact same picture was often tricky. In addition, the ISP was much more reluctant to create a depth map for non-human subjects on the front-facing camera: I often had to shoot significantly wider on the front-facing camera to get it to enable Portrait mode.
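Those numbers also show why both cameras have to fake their blur. A lens's entrance pupil diameter is its focal length divided by its f-number, and a wider pupil gives shallower optical depth of field. The quick calculation below assumes the quoted 2.87 and 6 are focal lengths in millimetres; the 50mm ƒ/1.8 lens is my own illustrative comparison, not something from the test.

```python
def entrance_pupil_mm(focal_length_mm, f_number):
    """Entrance pupil diameter = focal length / f-number."""
    return focal_length_mm / f_number


front = entrance_pupil_mm(2.87, 2.2)      # front-facing camera figures from the article
rear = entrance_pupil_mm(6.0, 2.4)        # rear-facing camera figures from the article
reference = entrance_pupil_mm(50.0, 1.8)  # hypothetical dedicated-camera lens, for scale

print(f"front:     {front:.2f} mm")
print(f"rear:      {rear:.2f} mm")
print(f"reference: {reference:.2f} mm")
```

Both phone openings come out at a few millimetres at most, an order of magnitude smaller than the comparison lens, so the depth map has to do the work that glass does on a larger camera.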

The results are fascinating: On photos with faces in them, the front-facing camera captures an astounding amount of close-up depth, and uses it to create much more natural looking fades and depth of field effects around the subject.

While both are nice portraits, the front-facing (left) portrait more naturally handles hair against bright light and the complicated mapping of the tree behind. The portrait from the rear camera (right) more directly cuts out the subject from its background.

Retina Flash photo from the front-facing camera (left) vs. True Tone flash photo from the rear-facing camera (right).

But once we leave the realm of true portrait photography, the front-facing camera suffers somewhat, in part, I suspect, because of its calibration. TrueDepth wasn't trained to find the depth of a glass or a pumpkin; its strength is in face mapping. In contrast, I'd wager that because the rear-facing camera's depth system is more rudimentary, it's better able to simulate depth on a wider range of objects, even if it doesn't always make those objects look perfect.

With a feline subject, the front-facing camera (left) still does a pretty good job making the fade look natural, though it slightly blurs the left ear. In contrast, the rear camera's photo (right) has a much sharper edge on the cat's head nearer to bright light.

In brighter light, the difference between the front (left) and rear (right) cameras is even more pronounced: The front camera's fur fade looks much more natural, though the back camera's superior sensor gets the better focus on a moving cat's face.

Inanimate objects are where the front camera (left) really starts to struggle. While the leaf fades look more natural, it doesn't know what to do with the flower or its stem.

Headphones with the TrueDepth (left) vs the rear camera (right).

Both the front (left) and rear (right) cameras had significant difficulty in trying to figure out the depth of a pumpkin stem in low light, though the front camera does get a nicer fade on the pumpkin's backside.

When shooting a wine glass in low light, the TrueDepth camera (left) gave up: I couldn't get it to enter Portrait mode no matter what I did. The rear camera (right) did get the glass, though… partially. Focusing on glass is hard.

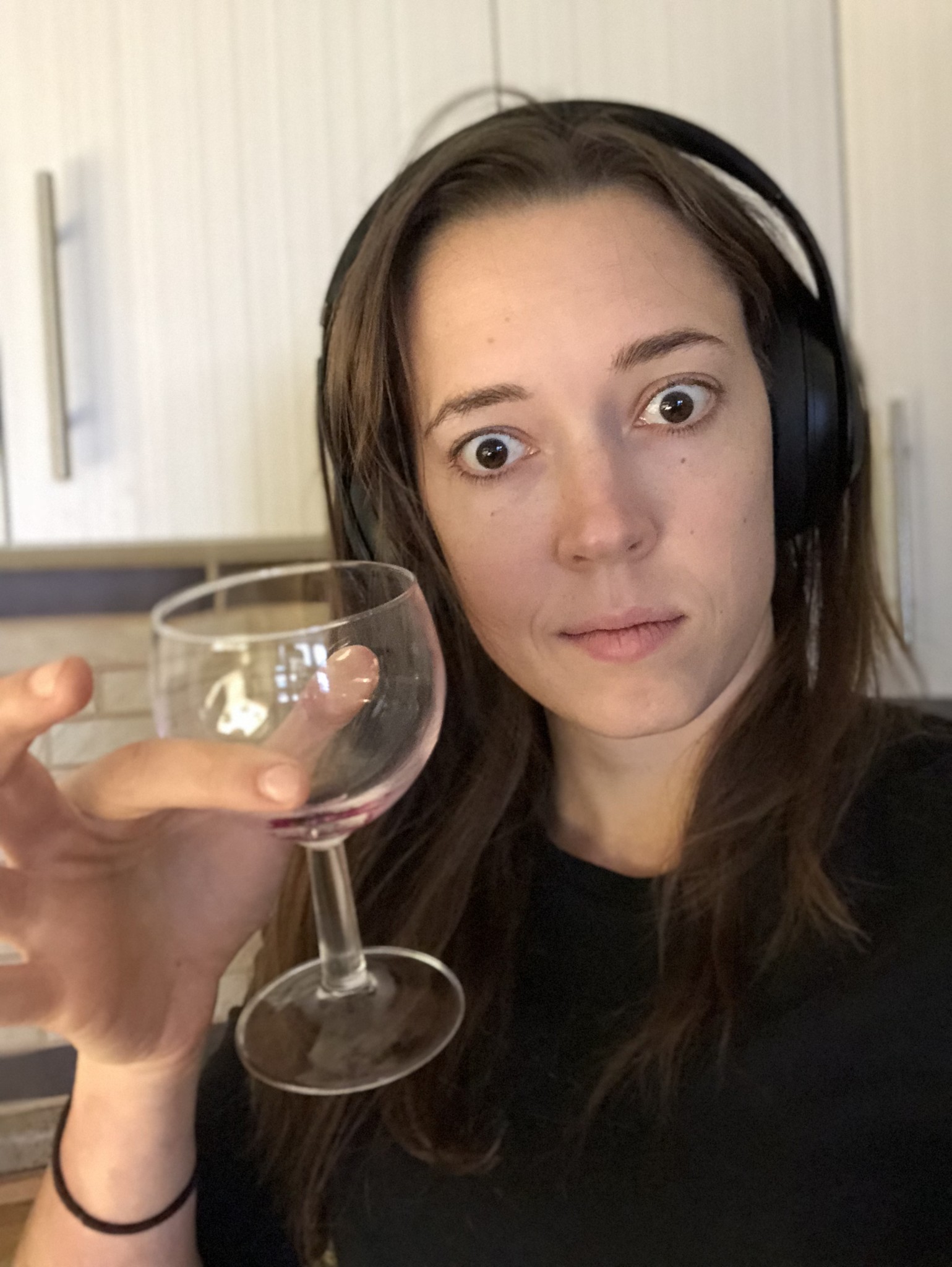

It took holding the wine glass before the front camera (left) would recognize it as a subject. Even then, it doesn't do a perfect job, but it does look more natural than the half-blur of the rear camera (right).

A tantalizing future

While I'm not recommending anyone abandon their rear camera setup to solely shoot with the TrueDepth system, this test does make me incredibly excited for the future of Apple's cameras. Right now, the TrueDepth sensors are too expensive to throw in the rear-facing cameras, but as component costs go down, I wouldn't be surprised to see variations on these sensors make their way into future iPhone camera kits.

And that could open the door for a lot of spectacular photography. We've already seen Snapchat and Clips playing around with the depth map on the front-facing camera; with TrueDepth built into the rear cameras (and paired with the kind of machine learning smarts Google has been experimenting with in the Pixel 2), the possibilities are as exciting as the tech itself.

We could get Portrait mode for video, background substitutions, smarter Slow Sync Flash and HDR technology, better Portrait Lighting, live-mapped Animoji — not to mention the AR possibilities when you have an intelligent depth sensor on the back of your smartphone.

Serenity was formerly the Managing Editor at iMore, and now works for Apple. She's been talking, writing about, and tinkering with Apple products since she was old enough to double-click. In her spare time, she sketches, sings, and in her secret superhero life, plays roller derby. Follow her on Twitter @settern.