iOS 11 helps you shoot photos straight from the heart

A funny thing happened when I picked up my iPhone 7 Plus back in September of 2016. I put down my Canon 5D Mark II. And I haven't picked it up since. It's not that the camera in my pocket has a sensor as light-sensitive or lenses anywhere near as flexible and powerful. But, thanks to the Portrait Mode and optical zoom enabled by its dual-camera system and image signal processor, it can take photos every bit as meaningful and emotional.

And, with iOS 11, it's about to get even better.

Years ago, the cliche was that Nokia had the glass, Apple had the silicon, and Google had the servers. In other words, a Lumia could capture great images, Apple could calculate great images, and since Google never knew what hardware was available on any given device, they'd just suck everything up to the cloud and make the best they could from it there.

Now, though, Apple is fielding fusion lenses and doing local computational photography, where machine learning, computer vision, and a lot of smart programming start to produce images beyond what any one of those processes could do alone. (And since it's done on-device, you don't have to give up all your private photos to Apple just to reap the benefits of the processing — your valuable data stays yours.)

Portrait Mode Plus

Portrait Mode on iPhone 7 Plus is the first mass-market application of fusion and computational photography that I'm aware of. On iOS 10, it came with fairly strict lighting requirements. On iOS 11, though, it benefits from better optical and software-based stabilization and shoots with high dynamic range (HDR), which dramatically improves low-light and high-contrast performance. For extreme low-light situations, iOS 11 will even let you do Portrait Mode with the LED flash.

Noise is still an issue, but Apple does a lot with the "grain" to mitigate it. So much so that I often don't notice the noise at all at first — I'm too busy marveling at the subject.

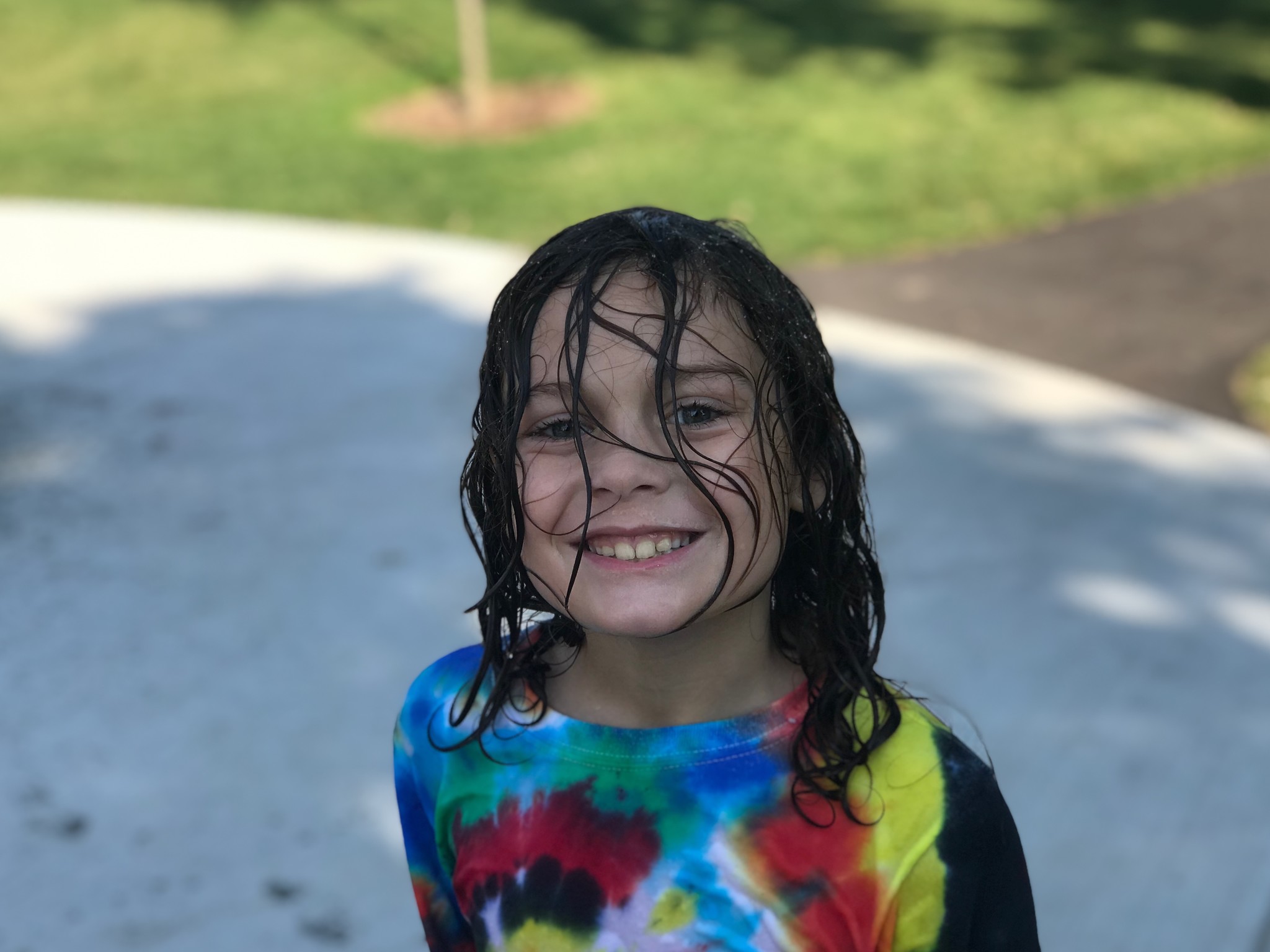

It's more than just faces now as well. Although "portrait" is in the name, since launch you've also been able to capture flowers, coffee cups (so many, sorry!), and more. Now it feels like it's working even better. For example, it can grab onto a chain-link fence and blur the background behind it.

Apple has also switched from the ancient JPEG (joint photographic experts group) format to the new HEIF (high-efficiency image format) in iOS 11. The efficiency in the name works out to about 50% space savings over JPEG in your library. It comes at the expense of slightly longer encode times, but nothing in life, and certainly nothing in imaging, is free. For photos on iPhone, though, the process is already so fast I've never noticed a difference.

What's even cooler about HEIF is that it can store multiple image assets in the same container.

For example: In iOS 10, when you shot on an iPhone 7 Plus in Portrait mode, the Camera app would spit out two images — one normal, and one with the depth effect burned in.

With HEIF, the depth data for Portrait Mode is retained but bundled into the same file. (HDR data, which is taken from multiple exposures, starts being processed at the ISP-level in the chipset, so that's burned in before it can be bundled into HEIF.)

The advantage to that is most apparent in photo editing, where filters can now apply different effects based on the depth or motion data. Not just on iPhone 7 Plus either. As long as the effect information is bundled into the HEIF, iPad and Mac can get every bit as "depthy" with it.

So, for example, the new filters in Camera and Photos can apply different shades and tones based on the depth data in the photo.
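To make the idea of a depth-aware filter concrete, here is a minimal sketch in plain Python. It is not Apple's implementation or API: the function name, the gain values, and the tiny grayscale "image" are all assumptions made for illustration. It simply brightens pixels the depth map marks as near and darkens the ones marked as far.

```python
def depth_aware_tone(pixels, depth, near_gain=1.2, far_gain=0.7):
    """Brighten near pixels and darken far ones (hypothetical filter).

    pixels: rows of grayscale values in 0..255
    depth:  rows of values from 0.0 (nearest) to 1.0 (farthest), same shape
    """
    out = []
    for pixel_row, depth_row in zip(pixels, depth):
        row = []
        for value, d in zip(pixel_row, depth_row):
            # Blend linearly between the near and far gain according to depth.
            gain = near_gain * (1.0 - d) + far_gain * d
            # Clamp to the valid 8-bit range after applying the gain.
            row.append(min(255, max(0, round(value * gain))))
        out.append(row)
    return out


# A flat gray 2x2 image with a subject top-left and background at the bottom.
image = [[100, 100], [100, 100]]
depth_map = [[0.0, 0.5], [1.0, 1.0]]
result = depth_aware_tone(image, depth_map)
```

The same photo gets a different treatment per pixel purely because of the depth values traveling with it, which is why keeping that data in the file matters for editing later.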

Those filters have been rethought not to duplicate what you typically see on social networks but to echo what you'd find in more classic photography: Vivid, Vivid Warm, and Vivid Cool, which play on vibrancy; Dramatic, Dramatic Warm, and Dramatic Cool, which toy with contrast; and Silvertone, which rounds out the previous Mono and Noir filters with something a little more high-key.

Silvertone is probably my favorite of the new "depthy" bunch.

Livelier Photos

Apple's been steadily improving Live Photos as well over the last couple of years. First and foremost, the quality you get from the 1.5-second before and after animations is much improved. So much so that Apple can start to offer some really cool new effect options.

Namely, Loop, Bounce, and Long Exposure.

Loop takes the 3 seconds of Live Photo animation and fades from end back to beginning, so the video plays over and over and over again, in an endless cycle.

Bounce takes the animation, plays it forward, then plays it back, like a perpetual ricochet.

Long Exposure takes the animation and shows all frames at once, so motion blurs and light stretches out across the frame.
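The three effects above can be sketched in a few lines of plain Python. This is only a rough illustration, not Apple's actual pipeline: each "frame" here is assumed to be a flat list of grayscale values, the function names and the `fade_len` parameter are made up for the example, and averaging frames is just one simple way to approximate a long exposure.

```python
def blend(frame_a, frame_b, weight_b):
    """Mix two frames pixel by pixel; weight_b is the share of frame_b."""
    return [a * (1.0 - weight_b) + b * weight_b
            for a, b in zip(frame_a, frame_b)]


def loop(frames, fade_len=1):
    """Crossfade the tail of the clip into its head so it repeats smoothly."""
    n = len(frames)
    if fade_len < 1 or 2 * fade_len > n:
        raise ValueError("fade_len must be at least 1 and at most half the clip")
    # The middle of the clip plays untouched.
    body = frames[fade_len:n - fade_len]
    # The last fade_len frames gradually turn into the first fade_len frames.
    fade = []
    for i in range(fade_len):
        weight = (i + 1) / (fade_len + 1)
        fade.append(blend(frames[n - fade_len + i], frames[i], weight))
    return body + fade


def bounce(frames):
    """Play forward, then backward, without repeating the two end frames."""
    return frames + frames[-2:0:-1]


def long_exposure(frames):
    """Average every frame pixel by pixel, which smears anything that moves."""
    count = len(frames)
    return [sum(values) / count for values in zip(*frames)]


# Six one-pixel frames that get steadily brighter.
clip = [[0], [10], [20], [30], [40], [50]]
looped = loop(clip, fade_len=1)
bounced = bounce(clip)
exposed = long_exposure(clip)
```

In each case the original frames are left alone and a new sequence (or a single still, for the long exposure) is built from them, so any of the three can be produced from the same capture.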

HEIF also works with Live Photos. Instead of a separate JPG and MOV (movie) file, you now have both the still/key photo and the video bundled into one file. That means those effects are also non-destructive, and you can go from bounce to loop and back again any time you like.
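One way to picture what non-destructive means here is a record that keeps the original frames untouched and stores only the name of the chosen effect. The sketch below is a simplified model under that assumption, not the real file layout or any Apple API; the `LivePhoto` class and `EFFECTS` table are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical effect table: each entry builds a new frame list from the originals.
EFFECTS = {
    "live": lambda frames: list(frames),
    "bounce": lambda frames: frames + frames[-2:0:-1],
}


@dataclass
class LivePhoto:
    frames: list          # original frames, never modified
    effect: str = "live"  # only the choice of effect is stored

    def render(self):
        """Build the playable sequence from the untouched originals."""
        return EFFECTS[self.effect](self.frames)


photo = LivePhoto(frames=["a", "b", "c"])
photo.effect = "bounce"
bounced = photo.render()
photo.effect = "live"
restored = photo.render()
```

Because the effect is applied at render time, switching it never costs you the original capture.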

In all cases, the effects are intelligent and try to lock position on static elements so the moving elements become even more dynamic in contrast.

Sure, these types of effects have been available in apps like Instagram and Snapchat for a while, but they were also stuck in those networks. If I wanted to make a fun bounce, I had to do it in Instagram and either share it with everyone or with friends on Instagram.

By adding effects into Live Photos, I can share them outside of my social networks — including with family and friends that want no part of the Facebook or Snapchat scenes.

That bounce of my friend and me tapping champagne glasses at my birthday? That went straight to them over iMessage. Securely. Privately. Not for the world or for the giant data harvesting companies. Just for us.

And, come iOS 11, you'll be able to do that with any not-for-networks photo now too.

Three screens, no waiting

There's a lot more coming to Camera and Photos in iOS 11 as well. QR scanning, for example, will let you quickly capture and act on codes. Memories, which was a surprise hit with mainstream users last year, is getting several new types, including: pets, babies, birthdays, sportsball, outdoor activities, driving, night life, performances, anniversaries, weddings, "over the years" (aka "this is your life"), "early memories" (aka "glory days"), visits, gatherings, and the one that scares and delights me almost as much as pets: meals.

There's even a stealthy level that fades in when you've got Grid enabled and you go to take a top-down photo of your food, coffee, everyday carry, etc. It's a glorious touch that helps the already composition-obsessed absolutely nail the shot.

Apple's still leaving manual controls and more interesting explorations of the depth effect to developers and third-party apps, but that's also helping keep Camera and Photos focused, which is a good thing. Apple's also sticking with its mission to capture natural, true-to-life photos. The Camera app isn't pre-crushing the blacks and boosting the saturation. It's letting you choose to do that on your own afterwards, if and how you really want to.

That's where the editing features come in, which I'll cover in my next article. You can shoot good photos on iPad Pro now, but you can edit fantastic photos across iPhone, iPad, and Mac. That means, whether you're on the go, easing back on the sofa, or at full attention at your desk, the same tools are available on the same photos, ready and waiting for you to work and play.

Taken together, it continues Apple's relentless drive to help more people capture better photos and preserve important memories under a wider range of conditions than ever before.

iOS 11, a free update coming this fall, won't give you a photographer's eye or experience, but it will maximize what you can do with your eyes and experience. It won't magically give you the sensor or glass of a high-end DSLR, but it will give you the feeling you get from taking photos with one, at least at a small, intimate scale.

It makes everyday photography a little less everyday. And, when it comes to a modern pocket camera, that's exactly the type of feeling you want.

Rene Ritchie is one of the most respected Apple analysts in the business, reaching a combined audience of over 40 million readers a month. His YouTube channel, Vector, has over 90 thousand subscribers and 14 million views and his podcasts, including Debug, have been downloaded over 20 million times. He also regularly co-hosts MacBreak Weekly for the TWiT network and co-hosted CES Live! and Talk Mobile. Based in Montreal, Rene is a former director of product marketing, web developer, and graphic designer. He's authored several books and appeared on numerous television and radio segments to discuss Apple and the technology industry. When not working, he likes to cook, grapple, and spend time with his friends and family.